This is the full developer documentation for Blober

# Back Up Your GoPro Cloud to Backblaze B2, AWS S3, or Local Storage

> Blober is the only tool that connects to GoPro Cloud. Move your GoPro footage to affordable, long-term storage before it's too late.

### The Problem

GoPro’s cloud storage (GoPro Plus / GoPro Premium) offers unlimited storage for GoPro camera media. It’s a great perk - until you want your footage somewhere else.

The reality for most GoPro users:

* **Painfully limited batch download** - GoPro’s web portal caps batch downloads at 25 files at a time, bundled into a ZIP. Large batches frequently fail or time out, and metadata like GPS data may be stripped during compression

* **No third-party tool support** - rclone, MultCloud, Flexify, and every other transfer tool do *not* support GoPro Cloud

* **Subscription dependency** - cancel GoPro Plus and your cloud access disappears. Your footage remains hostage to a recurring charge

* **No “Download All” option** - if you have hundreds or thousands of files, you’re stuck doing dozens of 25-file batch downloads manually, hoping none fail

GoPro community forums are filled with users asking the same question: *“How do I download all my GoPro Cloud content at once?”* - and the practical answer is: not without hours of manual work and frequent failures.

[**Blober**](https://blober.io/) changes that.

***

### Blober: The Only Tool That Connects to GoPro Cloud

[**Blober**](https://blober.io/) is the **only** desktop application that integrates with GoPro’s cloud storage. No other migration tool - free or paid - supports GoPro Cloud as a source or destination.

With Blober, you can:

* **Browse all your GoPro Cloud media** - photos and videos, organized by date, camera, and type

* **Download everything at once** to your local drive, NAS, or external HDD

* **Transfer directly** to Backblaze B2, AWS S3, Wasabi, Cloudflare R2, Azure Blob Storage, or DigitalOcean Spaces

* **Use metadata-based path templates** to auto-organize files (e.g., by camera model, capture date, resolution)

* **Resume interrupted transfers** - no need to start over if your connection drops

***

### Why Back Up GoPro Cloud?

#### 1. Subscription Lock-In

GoPro Plus costs \~$49.99/year. As long as you pay, your footage stays accessible. The moment you cancel, your cloud media goes offline. For years of footage, that’s a dangerous bet on a single subscription.

#### 2. No Redundancy

GoPro Cloud is your only copy in the cloud. There is no built-in backup, no versioning, no geographic replication. If GoPro ever changes its terms, shuts down the service, or experiences data loss, your footage could be gone.

#### 3. Cost Optimization

Long-term archival storage costs a fraction of ongoing subscriptions:

| Storage Option | Cost for 1 TB/year | Egress Fees |
| ----------------- | --------------------------- | ----------------------- |
| GoPro Plus | \~$49.99/year (ongoing) | N/A (limited downloads) |
| Backblaze B2 | \~$72/year ($6/TB/mo) | Free up to 3x stored |
| Wasabi | \~$83.88/year ($6.99/TB/mo) | Free |
| AWS S3 (Standard) | \~$276/year | $0.09/GB |
| Local NAS | One-time HDD cost | Free |

For most GoPro users, Backblaze B2 or Wasabi combined with a Blober one-time license is the most cost-effective long-term strategy.
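The annual figures for Backblaze B2 and Wasabi follow directly from their monthly per-TB rates. As a minimal sketch, assuming the rates quoted in the table and ignoring egress, minimum-retention charges, and taxes (the function and dictionary names are illustrative, not part of Blober):

```python
# Monthly per-TB storage rates in USD, copied from the table above.
MONTHLY_RATE_PER_TB = {
    "Backblaze B2": 6.00,
    "Wasabi": 6.99,
}


def annual_cost(provider: str, terabytes: float) -> float:
    """Yearly storage cost in USD for a constant amount of stored data."""
    return round(MONTHLY_RATE_PER_TB[provider] * terabytes * 12, 2)


for name in MONTHLY_RATE_PER_TB:
    print(f"{name}: ${annual_cost(name, 1):.2f}/year for 1 TB")
```

Swapping in your own archive size gives a quick estimate before you pick a destination.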

#### 4. You Own Your Footage

Your GoPro footage is yours. Keeping it locked behind a single provider’s subscription model is not ownership - it’s rental. Backing it up to storage you control gives you true data sovereignty.

***

### How It Works

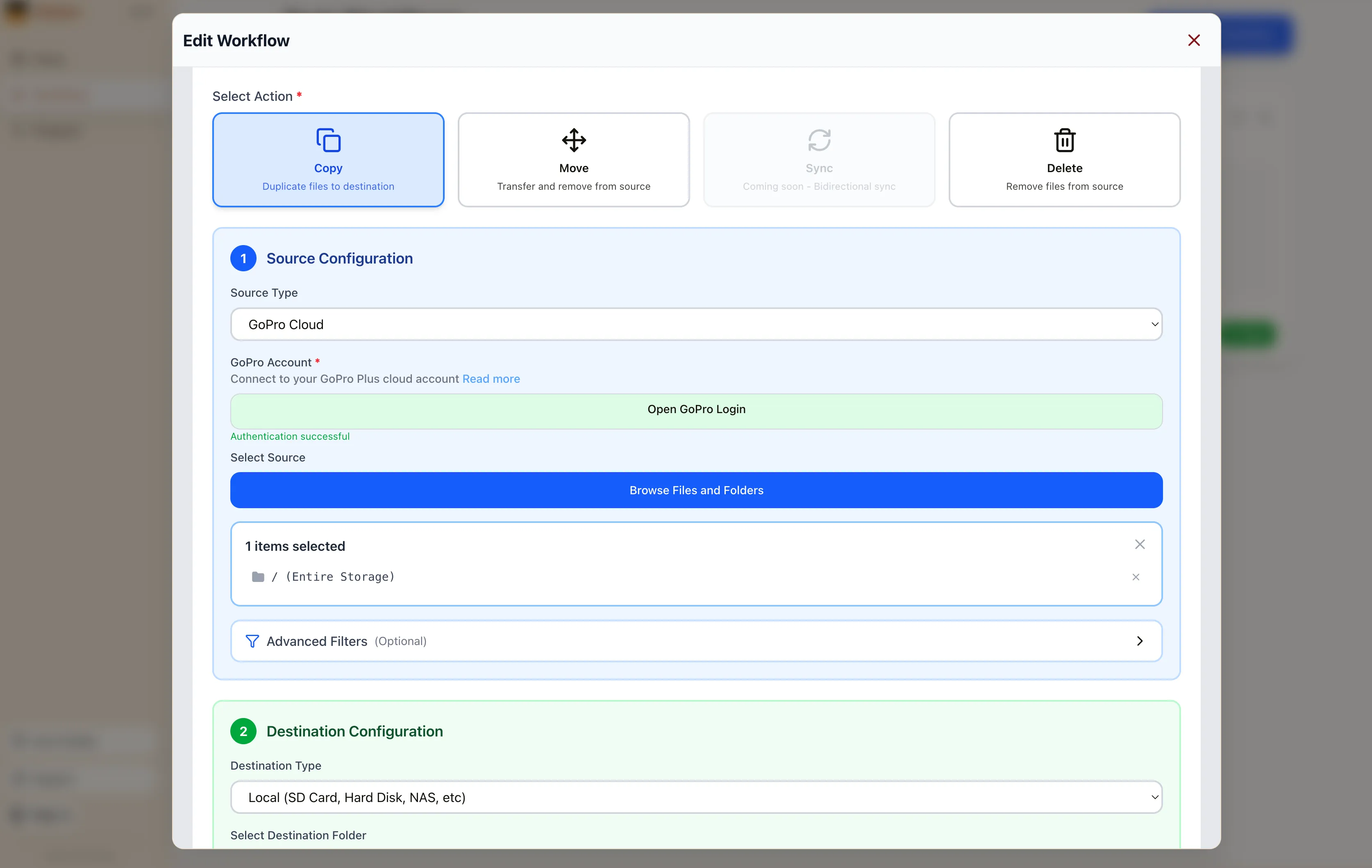

#### Step 1: Connect GoPro Cloud in Blober

1. Open [**Blober**](https://blober.io/) and create a new workflow

2. Select **GoPro** as the source

3. Click **Open GoPro Login** - a browser window opens

4. Sign in with your GoPro account

5. Blober captures your session automatically

#### Step 2: Choose Your Destination

Select where you want your footage to go:

* **Local disk** - your SSD, HDD, NAS, or external drive

* **Backblaze B2** - affordable, S3-compatible, free egress up to 3x your stored data

* **AWS S3** - enterprise-grade, global availability

* **Wasabi** - hot storage with no egress fees

* **Cloudflare R2** - zero egress, fast edge delivery

* **Any other Blober-supported provider**

#### Step 3: Configure Path Templates (Optional)

Use Blober’s metadata-based path templates to auto-organize files as they transfer:

```plaintext
/{camera_model}/{capture_date}/{filename}
```

This turns a flat GoPro dump into a clean archive:

```plaintext
/HERO13 Black/2026-01-23/GX015742.MP4
/HERO13 Black/2026-01-23/GOPR0001.JPG
/HERO12 Black/2025-12-15/GX014521.MP4
```
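To make the expansion concrete, here is a minimal sketch of how a template like this could be filled from a file's metadata. It is an illustration only, not Blober's implementation: the `render_path` helper and the metadata dictionary are hypothetical, and the placeholder names are taken from the template above.

```python
from string import Formatter


def render_path(template: str, metadata: dict[str, str]) -> str:
    """Fill {placeholders} in a path template from a file's metadata."""
    # Collect placeholder names so a missing field fails with a clear message.
    fields = [name for _, name, _, _ in Formatter().parse(template) if name]
    missing = [name for name in fields if name not in metadata]
    if missing:
        raise KeyError(f"metadata is missing fields: {missing}")
    return template.format(**metadata)


# Hypothetical metadata for one video, using the field names from the template.
example = {
    "camera_model": "HERO13 Black",
    "capture_date": "2026-01-23",
    "filename": "GX015742.MP4",
}
print(render_path("/{camera_model}/{capture_date}/{filename}", example))
# prints: /HERO13 Black/2026-01-23/GX015742.MP4
```

Checking for missing fields up front avoids writing files to a half-formed path when a piece of metadata is unavailable.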

#### Step 4: Run and Monitor

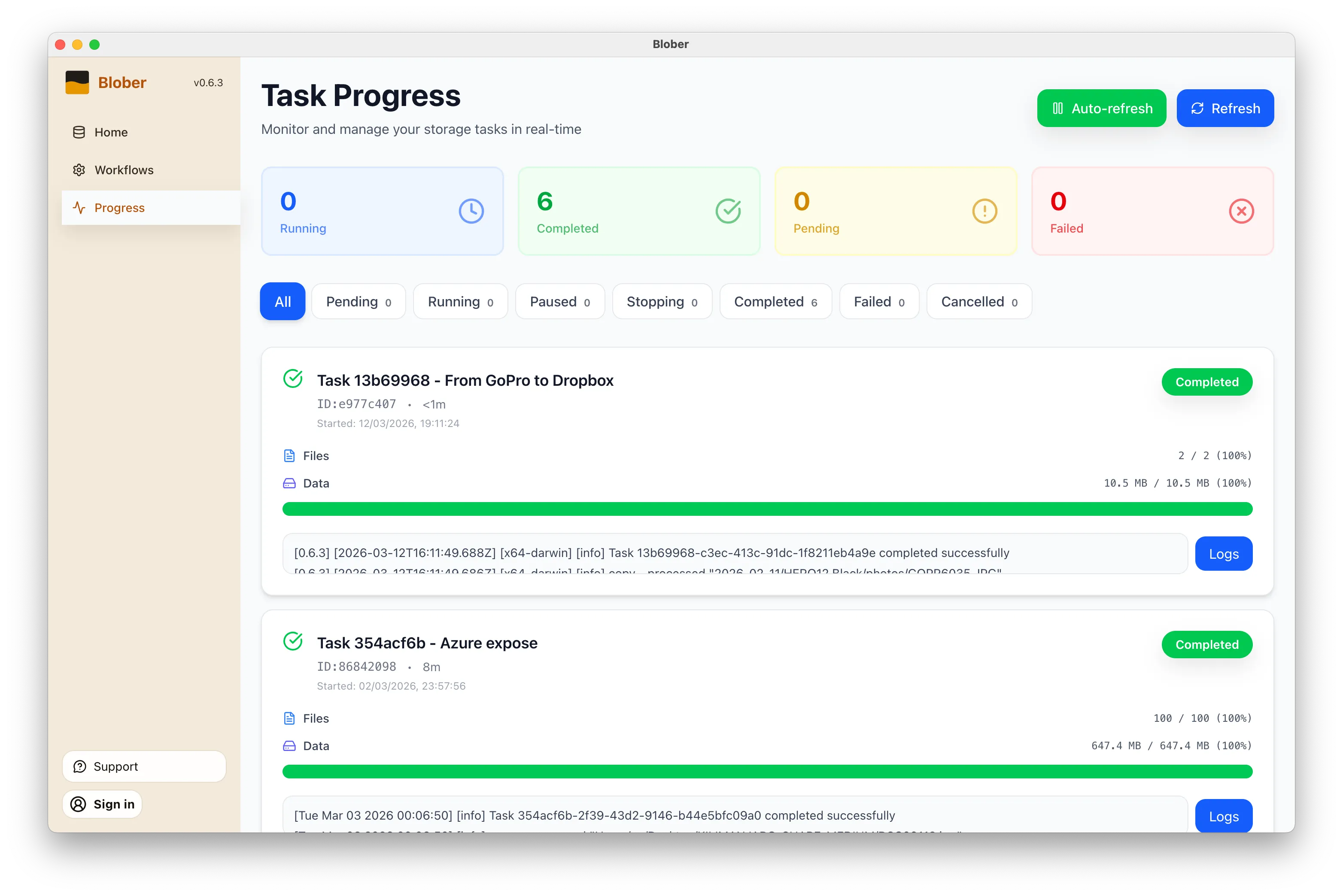

Click **Start** and Blober handles the rest:

* Parallel downloads for maximum throughput

* Real-time progress tracking

* Automatic resume on interruption

* Full task history logged for every file

***

### Supported GoPro Media

| Type | Extensions |
| ------ | ------------------------------ |
| Videos | `.mp4`, `.mov`, `.avi`, `.mkv` |
| Photos | `.jpg`, `.png`, `.raw`, `.dng` |

Blober downloads the **highest available quality** - no compression, no re-encoding.

***

### Metadata Available

Each GoPro file includes rich metadata that Blober can use for organization:

| Field | Example |
| ------------ | ------------- |
| Camera model | HERO13 Black |
| Capture date | 2026-01-23 |
| Resolution | 5312 × 2988 |
| File size | 142.5 MB |
| Duration | 0:32 (videos) |

***

### Frequently Asked Questions

**Can I upload to GoPro Cloud with Blober?** Yes. Blober supports uploads to GoPro Cloud (up to 5 TB per file) with multipart upload and progress tracking.

**Does Blober store my GoPro credentials?** No. Blober uses a browser-based login flow. Your session lasts approximately 20 hours, after which Blober prompts you to sign in again. Credentials are never stored or transmitted to any server.

**Can rclone, MultCloud, or Flexify do this?** No. As of February 2026, Blober is the only transfer tool that supports GoPro Cloud. rclone (70+ providers), MultCloud (30+ services), and Flexify (\~25 clouds) do not include GoPro Cloud integration.

**What if my transfer is interrupted?** Blober saves progress and resumes from the last successfully transferred file. No need to re-download everything.

***

### Take Control of Your GoPro Footage

Your footage is irreplaceable - years of adventures, events, and memories sitting in a cloud you can only access through a subscription. [**Blober**](https://blober.io/) gives you a way out: move it all to storage you own and control, in the highest quality, organized exactly how you want.

[Get started with Blober →](https://blober.io/)

# Back Up Cloud Storage Directly to Your NAS

> Transfer files from GoPro Cloud, Dropbox, Google Drive, or S3 straight to your Synology, QNAP, or any network drive — no double-copy, no CLI, no subscription.

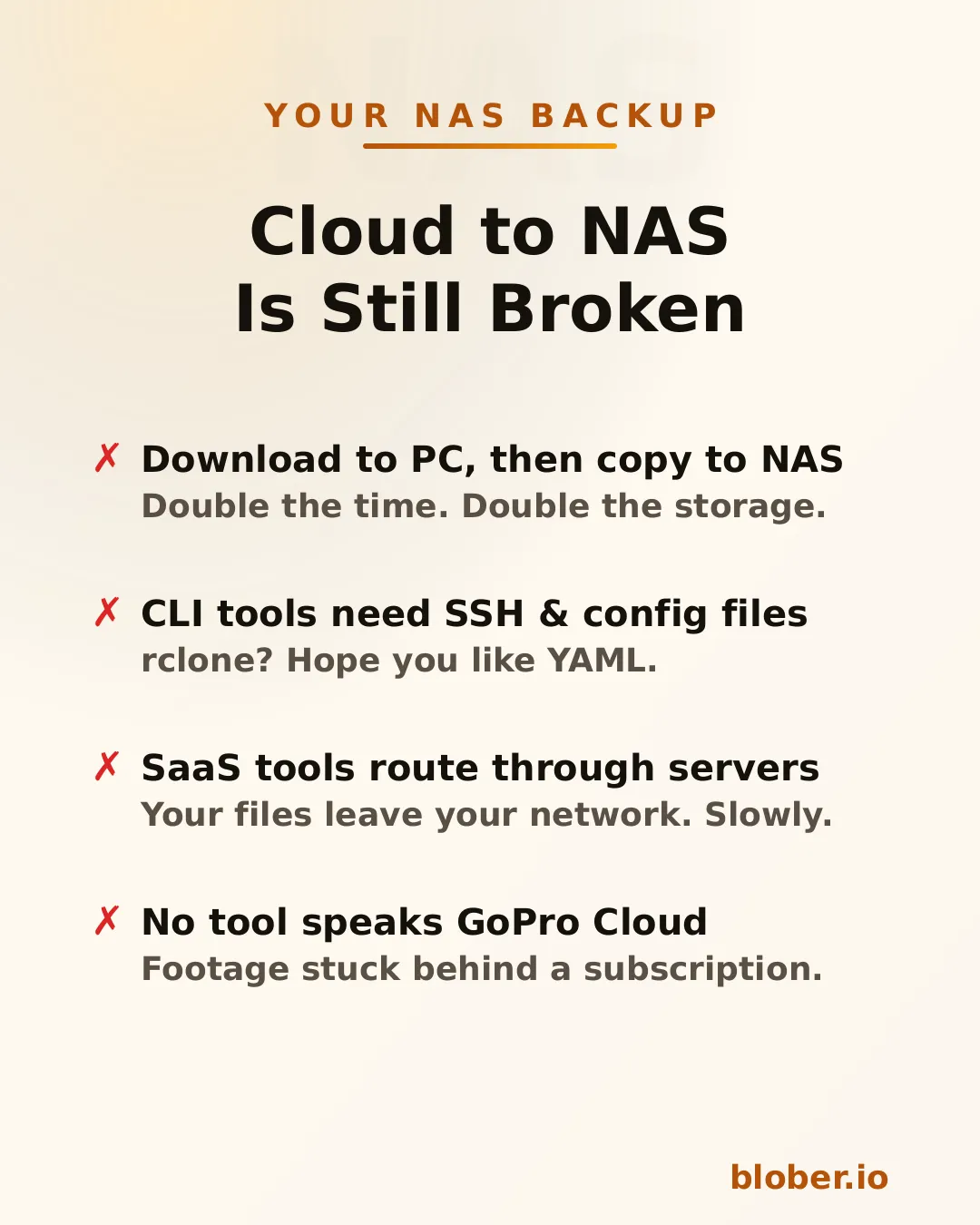

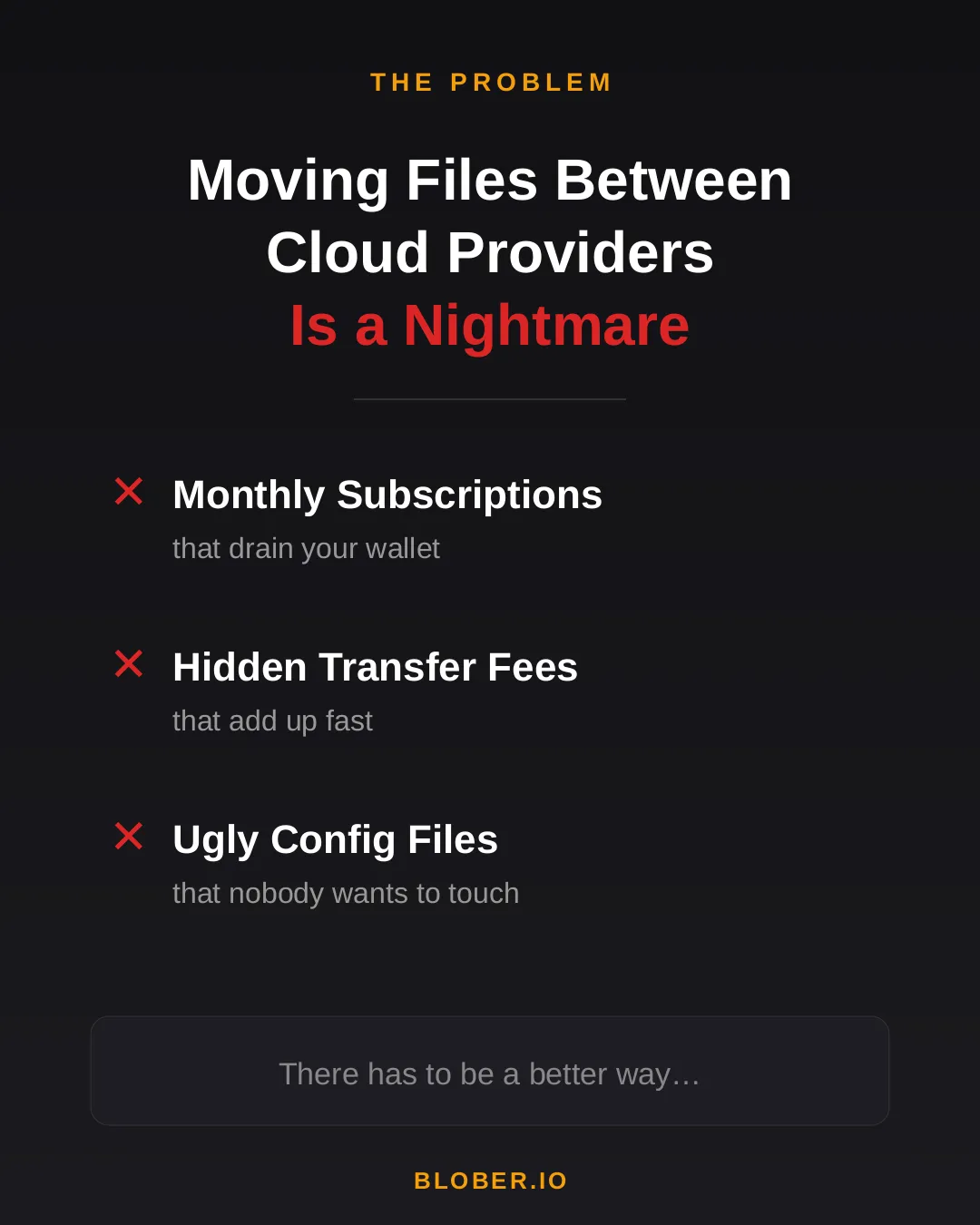

## The Problem

You have files in the cloud — GoPro footage, Dropbox archives, Google Drive projects, S3 buckets — and you want them on your NAS. Simple enough in theory. In practice, the available options are all some flavor of painful.

**Download then copy** is the default workflow. Download everything from the cloud to your PC, then manually copy it to the NAS. You need enough free space on your PC for the entire dataset, every byte gets moved twice, and if the NAS connection drops mid-copy you start over.

**CLI tools like rclone** can mount cloud storage or sync directly, but you need to configure remotes, edit a config file, manage credentials, and troubleshoot provider-specific flags. It works, eventually. It’s not something most people reach for on a Saturday afternoon.

**SaaS migration services** like MultCloud or Cloudsfer route your files through their servers. Your data leaves your network, passes through a third party, then comes back down to your NAS. It’s slower, it’s a privacy concern, and it costs a monthly subscription — usually with transfer caps.

**GoPro Cloud has no solution at all.** No migration tool supports it. rclone doesn’t. MultCloud doesn’t. You’re stuck batch-downloading 25 files at a time through a web browser, manually.

***

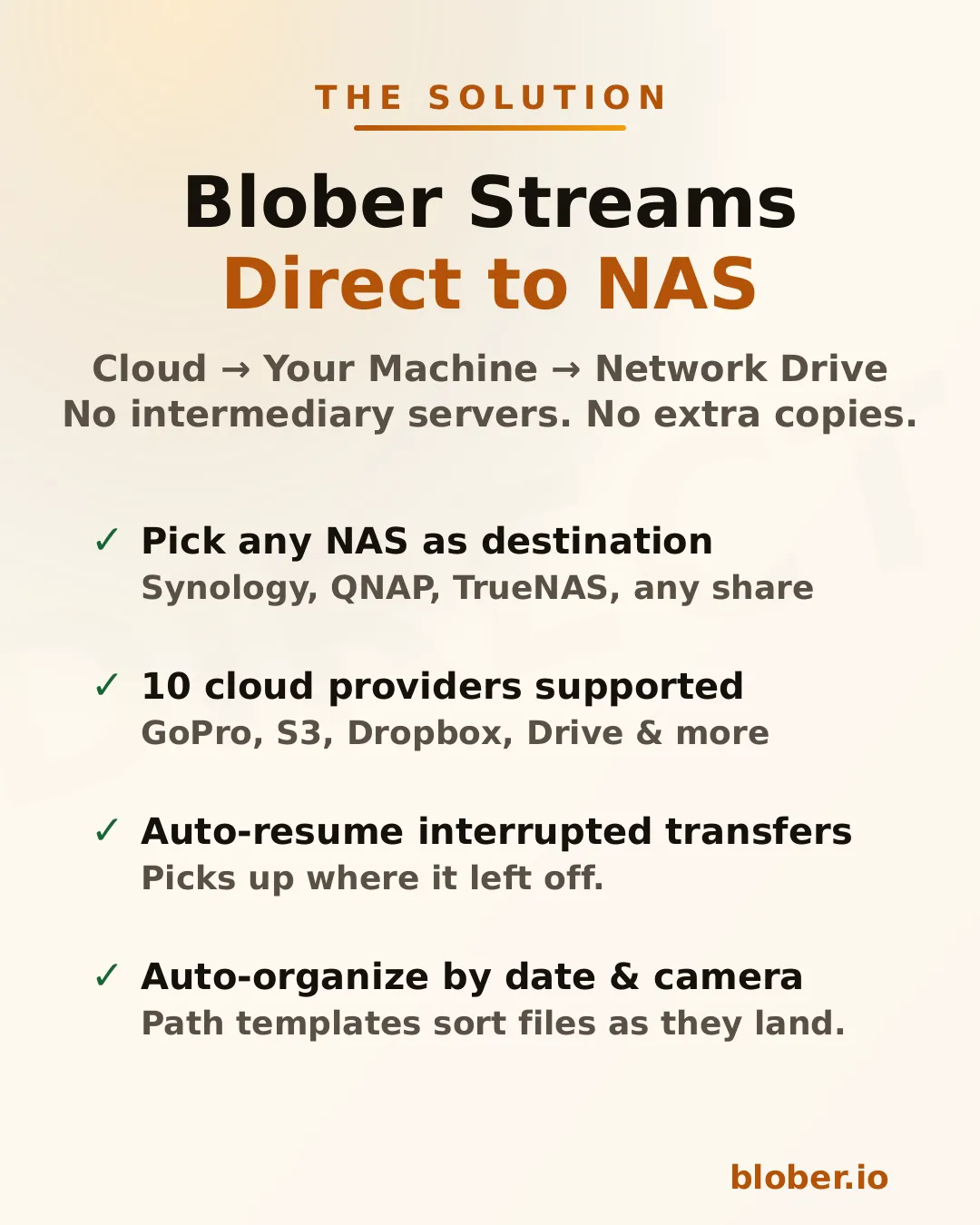

## Blober Streams Directly to Your NAS

[**Blober**](https://blober.io/) is a desktop app that connects to 10 cloud providers and transfers files to any local or network destination — including NAS drives.

The architecture is straightforward: Blober runs on your computer, pulls data from the cloud API, and writes it to whatever destination you select in the file picker. If that destination is a mapped network drive (`\\SYNOLOGY\backup` or `/Volumes/NAS/media`), the files go there.

No intermediate server. No extra copy on your local disk. No subscription.

### Supported NAS systems

Blober works with **any NAS that your OS can see as a folder**:

* **Synology DiskStation** - map via SMB/CIFS (`\\synology\shared`) or mount via NFS

* **QNAP** — same: SMB share or NFS mount

* **TrueNAS / FreeNAS** — SMB, NFS, or iSCSI-backed mount points

* **Unraid** — SMB shares show up as network folders

* **Western Digital My Cloud** — maps as a standard network drive

* **Any SMB/NFS share** — if your OS can browse it, Blober can write to it

There’s nothing NAS-specific to configure in Blober. You just pick the folder.
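Because the NAS is just a folder as far as the operating system is concerned, you can confirm that a share is mounted and writable before starting a large transfer. The following is a minimal sketch of such a pre-flight check; `is_writable_folder` is a hypothetical helper for illustration and is not part of Blober.

```python
import tempfile
from pathlib import Path


def is_writable_folder(folder: str) -> bool:
    """Return True if `folder` exists and a file can be created inside it."""
    path = Path(folder)
    if not path.is_dir():
        # The share is not mounted, or the path is misspelled.
        return False
    try:
        # Creating (and automatically deleting) a temp file proves write access.
        with tempfile.TemporaryFile(dir=path):
            pass
    except OSError:
        return False
    return True


# Demo against a scratch directory; substitute your own mapped NAS path.
with tempfile.TemporaryDirectory() as scratch:
    print(is_writable_folder(scratch))
    print(is_writable_folder(str(Path(scratch) / "not-mounted")))
```

On Windows you would pass a UNC path such as `r"\\SYNOLOGY\backup"`, and on macOS a mount point such as `"/Volumes/NAS/media"`.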

***

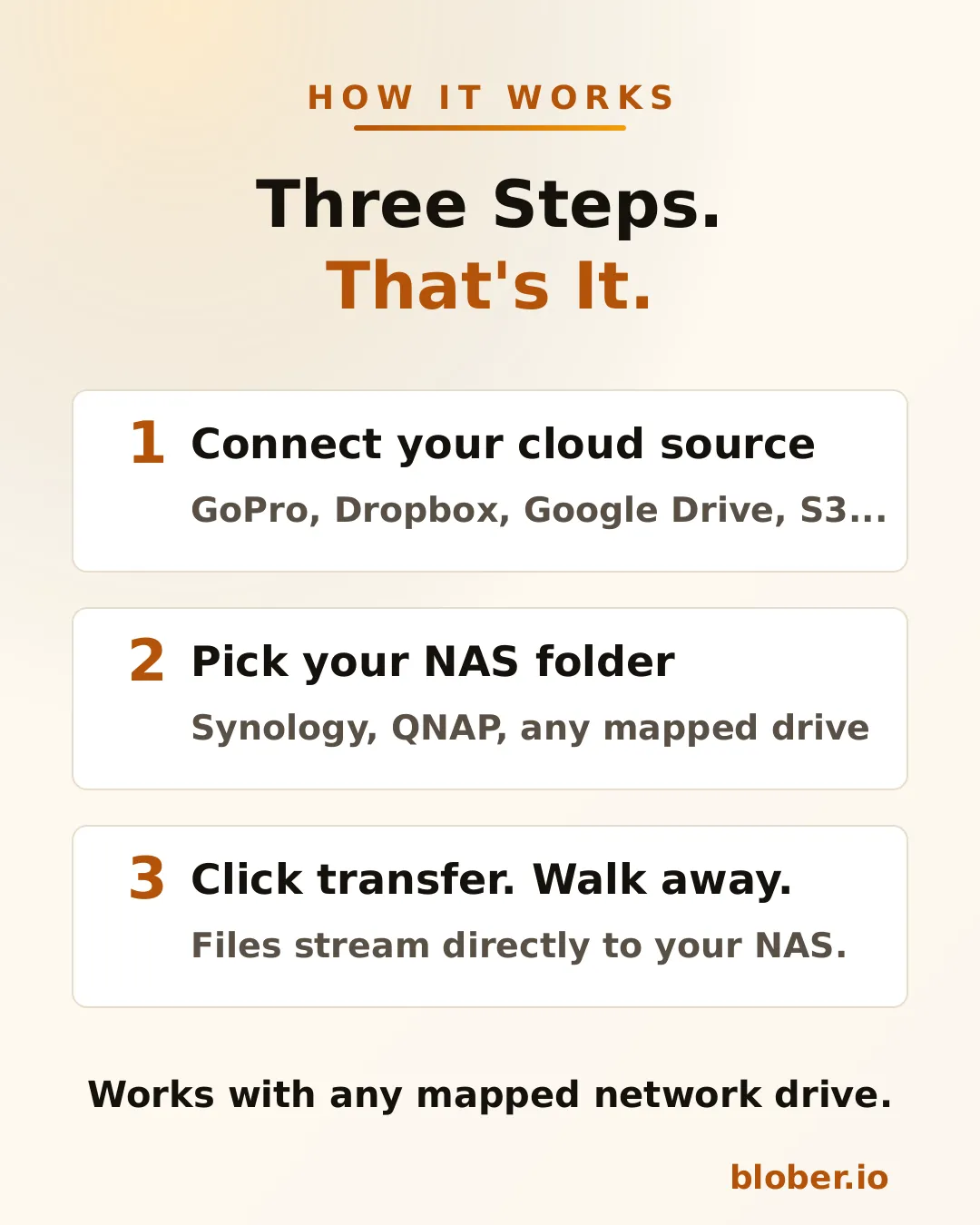

## How It Works

1. **Connect your cloud source.** Blober supports GoPro Cloud, Dropbox, Google Drive, AWS S3, Azure Blob, Backblaze B2, Cloudflare R2, Wasabi, and DigitalOcean Spaces. Authenticate once.

2. **Pick your NAS folder.** The standard OS folder picker shows your mapped network drives. Select the target directory on your NAS.

3. **Transfer.** Blober streams the files and writes them directly to the network path. If your connection drops or the NAS goes to sleep, the transfer resumes from where it stopped.

### Auto-organize with path templates

Blober supports path templates that sort files as they arrive:

```plaintext
{file_created_date}/{camera_model}/{media_type}/{filename}
```

This turns a flat cloud dump into an organized library:

```plaintext
2024-12-15/HERO12 Black/videos/GH010432.MP4
2024-12-15/HERO12 Black/photos/GOPR0900.JPG
2025-01-03/HERO7 Black/videos/GH010904.MP4
```

The template runs before the file is written — files land on your NAS already organized.

***

## Why NAS Users Specifically Benefit

NAS owners tend to be people who care about data ownership, long-term archival, and not paying recurring fees for storage they already bought. Blober aligns with all three.

**Your files stay on your network.** Unlike SaaS tools that route data through external servers, Blober pulls from the cloud API and writes locally. For NAS users who chose a NAS precisely to keep data under their control, this matters.

**One-time payment.** NAS users already rejected the subscription model when they bought hardware instead of renting cloud storage. Blober follows the same philosophy: pay once, use forever.

**Scale doesn’t matter.** Whether you’re backing up 50 GoPro clips or migrating 10 TB from S3, there are no transfer caps, no per-GB fees, and no throttling.

***

## Common NAS Backup Scenarios

| Scenario | Source | NAS destination |
| --------------------------- | ---------------------- | ----------------------------- |
| GoPro footage archive | GoPro Cloud | `\\NAS\media\gopro\` |
| Photo library consolidation | Google Drive + Dropbox | `\\NAS\photos\` |
| S3 cold storage migration | AWS S3 | `\\NAS\archive\s3-backup\` |
| Shared family photo vault | Dropbox | `\\SYNOLOGY\family-photos\` |
| Video production offload | Backblaze B2 | `\\NAS\projects\raw-footage\` |

Each of these is a single task in Blober. Set source, set destination, transfer.

***

## Who Is This For?

* **NAS owners** who want cloud backups on hardware they control

* **GoPro users** who need their footage off GoPro Cloud (Blober is the only tool that connects)

* **Photographers and videographers** archiving years of work to local network storage

* **Home lab users** consolidating data from multiple cloud services onto one NAS

* **Small businesses** migrating away from cloud storage subscriptions to on-premise drives

***

## Get Blober

One app. Ten cloud providers. Any NAS.

**[Download Blober at blober.io](https://blober.io)**

# Blober vs Flexify

> Predictable pricing, local execution, and full credential ownership for serious cloud transfers

### Overview

Both [**Blober**](https://blober.io/) and **Flexify.io** solve the same core problem: moving large volumes of data between cloud storage providers. They approach the problem from fundamentally different architectural and economic philosophies.

Flexify.io (founded 2015, Tampa FL) is a managed, cloud-based migration and virtualization platform built for enterprises moving tens or hundreds of terabytes in controlled, one-time projects. [**Blober**](https://blober.io/) is a local-first desktop workflow engine designed for continuous, repeatable transfers - no subscriptions, no per-GB fees, and no third-party servers touching your data.

***

### Architectural Philosophy

**Flexify.io**

* Cloud-hosted migration engines deployed on Flexify-managed infrastructure

* Data routes through Flexify servers (or, for managed 10 TB+ migrations, direct cloud-to-cloud)

* Usage-based pricing - you pay per GiB transferred

* Emphasis on API virtualization: translates Amazon S3 API to Azure Blob Storage on-the-fly

* Supports \~25 object-storage providers (S3-compatible, Azure, GCS, Alibaba, etc.)

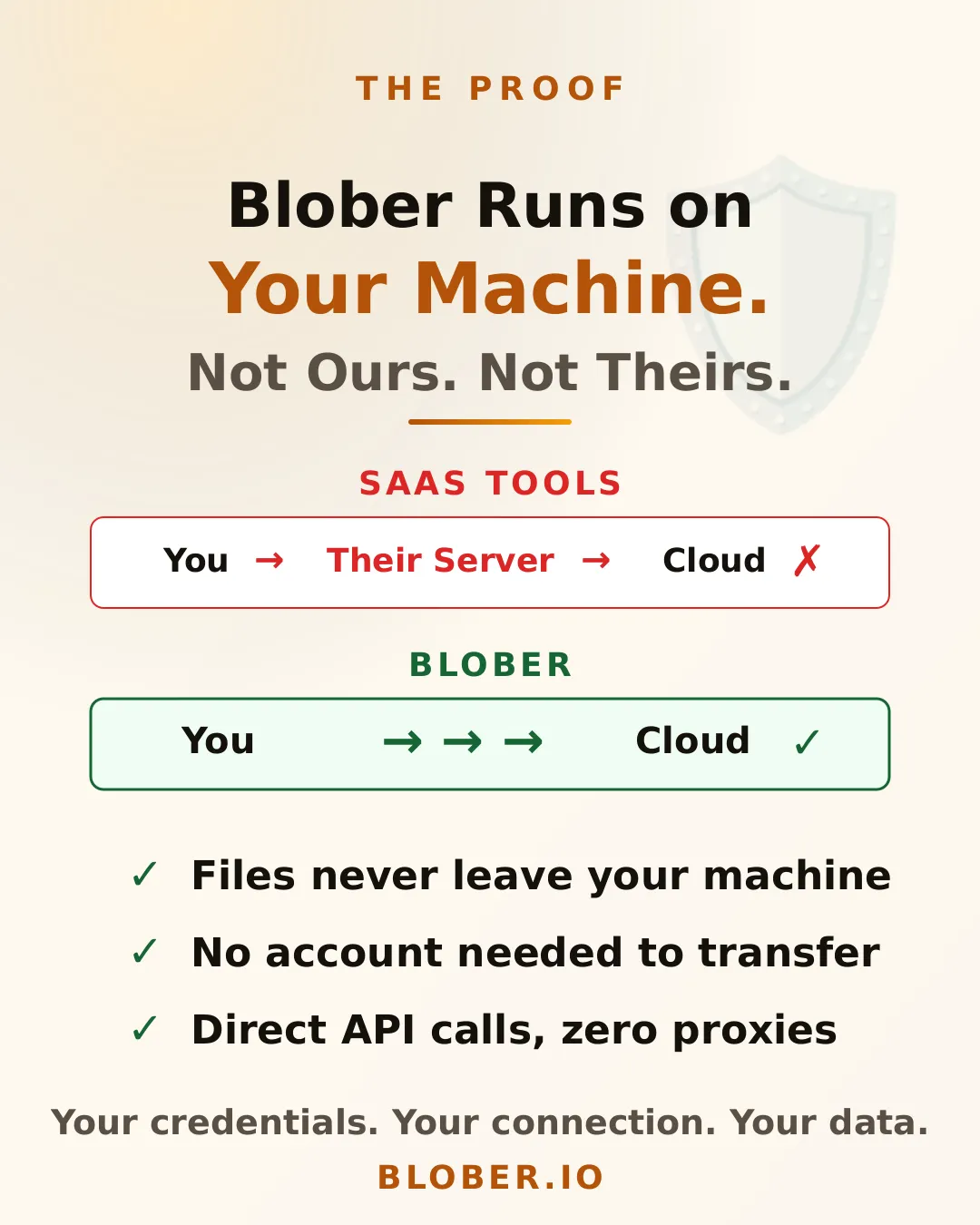

[**Blober**](https://blober.io/)

* Runs entirely on your local machine (Windows, macOS, Linux)

* Transfers go directly between your machine and each storage provider - **no intermediary servers**

* All credentials stored locally and never transmitted to a third party

* Supports unique providers like **GoPro Cloud** that no other migration tool covers

This distinction matters for users who care about cost predictability, credential ownership, data sovereignty, and ongoing workflows rather than one-time migrations.

***

### Pricing Model Comparison

| Aspect | [**Blober**](https://blober.io/) | Flexify.io |
| -------------- | -------------------------------- | ----------------------------------------------------------- |
| Pricing style | ✅ One-time license | Usage-based (per GiB) |
| Current cost | Discounted beta pricing | \~$0.03/GiB Flexify fee + provider egress ($0.05–$0.09/GiB) |
| Subscription | ✅ None | Sign-up required ($20 free credit) |
| Long-term cost | ✅ Fixed forever | Grows with every transfer |
| 1 TB migration | ✅ One-time price | \~$80 – $120+ in fees |

For a single 1 TB migration from AWS S3 to Google Cloud Storage, Flexify’s self-service fee (\~$0.03/GiB) plus provider egress ($0.05–$0.09/GiB) comes to approximately $0.08–$0.12 per GiB - translating to **$80–$120+** for that one job. With [**Blober**](https://blober.io/), only your provider’s standard egress fees apply; there is no Blober per-GB charge.
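The arithmetic behind that estimate can be sketched in a few lines. This assumes the per-GiB figures from the pricing table above and, like the estimate itself, treats 1 TB as roughly 1,000 GiB; the constant and function names are illustrative.

```python
# Per-GiB figures in USD, taken from the pricing table above.
FLEXIFY_FEE_PER_GIB = 0.03
EGRESS_PER_GIB_RANGE = (0.05, 0.09)  # provider egress, low and high end


def migration_cost_range(gib: float) -> tuple[float, float]:
    """Low and high total cost (service fee plus egress) for one migration."""
    low = gib * (FLEXIFY_FEE_PER_GIB + EGRESS_PER_GIB_RANGE[0])
    high = gib * (FLEXIFY_FEE_PER_GIB + EGRESS_PER_GIB_RANGE[1])
    return round(low, 2), round(high, 2)


low, high = migration_cost_range(1000)
print(f"1,000 GiB: ${low:.2f} to ${high:.2f}")
```

Because the cost is linear in volume, a 10x larger job costs 10x as much, which is why recurring transfers add up under usage-based pricing.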

***

### Feature Comparison

| Feature | [**Blober**](https://blober.io/) | Flexify.io |
| ------------------------------ | -------------------------------- | ----------------------- |
| Cloud-to-cloud transfer | ✅ Yes | Yes |
| Local filesystem integration | ✅ Yes | Limited |
| GoPro Cloud support | ✅ Yes | ❌ No |
| Metadata-based path templating | ✅ Yes | No |
| Persistent task history | ✅ Yes | Managed dashboard |
| Workflow reuse | ✅ Yes | Limited |
| Resumable workflows | ✅ Yes | Yes |
| API access | No | Yes |
| Virtual S3 endpoint | No | Yes |
| Credential storage | ✅ Local only | Cloud-managed |
| Data path | ✅ Direct | Through Flexify servers |

***

### Data Sovereignty and Privacy

With Flexify, your storage credentials are stored on their servers and your data may transit through Flexify-managed infrastructure. For regulated industries, sensitive media archives, or personal data, this introduces a third-party dependency and potential compliance exposure.

[**Blober**](https://blober.io/) eliminates this concern entirely:

* **Credentials never leave your machine** - no third-party vault, no OAuth token stored in a SaaS dashboard

* **Data flows directly** between your local machine and each cloud provider

* **No account required** - Blober works offline with a one-time license

* Full control over when, where, and how your data moves

***

### Unique Provider Coverage

[**Blober**](https://blober.io/) is the only migration tool that supports **GoPro Cloud** - allowing GoPro users to back up or transfer their media archives to any supported provider (AWS S3, Backblaze B2, Wasabi, local disk, etc.). Flexify, rclone, and MultCloud do not offer GoPro Cloud integration.

This makes [**Blober**](https://blober.io/) the go-to choice for photographers, videographers, and agencies managing GoPro footage across storage tiers.

***

### Maturity and Risk Tradeoff

Flexify.io is a mature enterprise platform (since 2015) with production-scale deployments and petabytes migrated. [**Blober**](https://blober.io/) is newer and currently in beta, with faster iteration and less operational overhead.

[**Blober**](https://blober.io/) offsets its maturity gap with:

* Aggressive beta pricing - lock in your license before prices go up

* Rapid feature development with direct community influence on the roadmap

* No lock-in to ongoing fees - one purchase, unlimited use

* Desktop-native architecture that is inherently simpler and more predictable

***

### When [**Blober**](https://blober.io/) Makes More Sense

Choose [**Blober**](https://blober.io/) if you:

* Transfer data regularly, not just once

* Want full control over credentials and data flow

* Need GoPro Cloud support (only Blober has it)

* Prefer a native desktop UI over enterprise SaaS dashboards

* Want predictable lifetime pricing with no per-GB surprises

* Care about data sovereignty - no third-party servers touching your files

# Blober vs Flexify vs rclone

> Three tools, three philosophies, one decision. Compare Blober.io, Flexify.io, and rclone to find the best fit for your cloud data transfer needs in 2026.

### Overview

Three tools dominate cloud data transfer in 2026 - each solving the problem from a completely different angle. Here’s how they compare.

***

### High-Level Comparison Table

| Dimension | [**Blober**](https://blober.io/) | Flexify.io | rclone |
| ----------------------- | -------------------------------- | ---------------------------------------- | --------------------- |
| Architecture | ✅ Local-first desktop | Managed SaaS | CLI utility |
| Pricing | ✅ One-time license | Usage-based (\~$0.03–$0.04/GiB + egress) | Free |
| Ease of use | ✅ High (native GUI) | Medium (web dashboard) | Low (terminal only) |
| Provider count | 9+ and growing | \~25 (object storage) | 70+ |
| GoPro Cloud support | ✅ **Yes (exclusive)** | ❌ No | ❌ No |
| Credential control | ✅ Local only | Cloud-managed | Local config file |
| Data path | ✅ Direct (no middleman) | Through Flexify servers | Direct (local) |
| Workflow persistence | ✅ Built-in | Dashboard-based | None (manual scripts) |
| Task history & resume | ✅ Built-in | Dashboard-based | Logs only |
| Metadata path templates | ✅ Yes | No | Manual scripting |
| Automation | Limited | High | Very high |
| API virtualization | No | Yes (S3-to-Azure gateway) | No |
| Enterprise scale | High | High | High |
| Open source | No | No | Yes |
| Best for | Agencies, creators, engineers | Enterprises (petabyte migrations) | Engineers, sysadmins |

***

### Pricing at a Glance

| Scenario | [**Blober**](https://blober.io/) | Flexify.io | rclone |
| ----------------- | -------------------------------- | ------------------- | ------ |
| 100 GB migration | ✅ One-time | \~$8 – $12 | Free |
| 1 TB migration | ✅ One-time | \~$80 – $120+ | Free |
| 10 TB migration | ✅ One-time | \~$800 – $1,200+ | Free |
| Recurring monthly | ✅ $0 | Compounds every run | Free |

Flexify charges per GiB transferred plus cloud provider egress fees. Costs add up fast for recurring workflows. rclone is free but demands engineering time. [**Blober**](https://blober.io/) sits in the sweet spot: pay once, transfer forever.

***

### Data Sovereignty

| Concern | [**Blober**](https://blober.io/) | Flexify.io | rclone |
| ----------------------- | -------------------------------- | ------------------- | ----------------- |
| Credentials stored | ✅ Local only | Flexify servers | Local config file |
| Data transits 3rd party | ✅ No | Yes (Flexify infra) | No |
| Account required | ✅ No | Yes | No |
| Offline operation | ✅ Yes | No | Yes |

For regulated industries, sensitive media archives, or personal data, avoiding third-party intermediaries is not a preference; it is a requirement. Both [**Blober**](https://blober.io/) and rclone keep your data path clean. Flexify introduces a managed middleman.

***

### The GoPro Factor

[**Blober**](https://blober.io/) is the **only** transfer tool that supports **GoPro Cloud**. Neither Flexify nor rclone can access GoPro’s storage. If you manage GoPro footage - whether as a creator, agency, or production house - Blober is the only option for migrating that media to professional storage like Backblaze B2, AWS S3, or your local NAS.

***

### Summary

* **rclone** is the most powerful tool if you are deeply technical, automation-driven, and comfortable with terminal workflows. It is free and supports 70+ providers.

* **Flexify.io** is ideal for enterprises running massive one-time migrations under strict SLAs, especially when virtual S3 endpoints or managed infrastructure are required. Budget accordingly - costs scale with data volume.

* [**Blober**](https://blober.io/) fills the gap between them: professional-grade transfers with a native desktop GUI, local credential control, visual workflows, predictable one-time pricing, and exclusive GoPro Cloud support.

[**Blober**](https://blober.io/)’s beta pricing locks in a lifetime license at a fraction of the cost competitors charge for a single large migration. For users who value simplicity, sovereignty, and long-term savings - Blober is the clear choice.

# Blober vs MultCloud

> One-time pricing and local control versus subscription-based cloud transfer with data caps

### Overview

[MultCloud](https://www.multcloud.com/) (founded 2012, Hong Kong) is a web-based platform for transferring, syncing, and managing files across 30+ cloud services. It is subscription-based and routes all data through MultCloud’s servers.

[**Blober**](https://blober.io/) is a local-first desktop application that transfers data directly between your machine and cloud providers - no middleman, no subscription, no data caps.

Both tools target non-technical users who want cloud-to-cloud transfers without writing scripts. The difference lies in architecture, pricing, and trust.

***

### Architectural Difference

**MultCloud**

* Web-based SaaS - runs entirely in your browser

* All data routes through MultCloud’s servers in Hong Kong

* Requires an account and OAuth access to your cloud accounts

* Subscription required for meaningful use (free tier: 5 GB/month)

[**Blober**](https://blober.io/)

* Native desktop application (Windows, macOS, Linux)

* Data flows directly between your machine and each cloud provider

* **No intermediary servers** - your files never touch a third party

* Credentials stored locally, never transmitted

***

### Pricing Model Comparison

| Aspect | [**Blober**](https://blober.io/) | MultCloud |
| ------------------- | -------------------------------- | -------------------------------------- |
| Pricing style | ✅ One-time license | Subscription (annual) |
| Free tier | N/A (beta pricing) | 5 GB/month, 2 transfer threads |
| Mid-tier plan | - | **$59.99/year** - 1,200 GB/year |
| Top-tier plan | - | **$99.98/year** - 2,400 GB/year |
| Transfer threads | Automatic parallelism | Free: 2 threads, Paid: 10 threads |
| Data cap | ✅ **None** | Capped per plan (5 GB – 2,400 GB/year) |
| Long-term cost (3y) | ✅ One-time purchase | $180 – $300+ |

MultCloud’s data traffic limits are a hard ceiling. Once you exhaust your annual quota, transfers stop until you renew. [**Blober**](https://blober.io/) has **no transfer caps** - move as much data as your bandwidth allows.
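To see what an annual cap means for a large archive, the sketch below estimates how many plan years a transfer would span. It assumes the quotas from the table above, that unused quota does not roll over, and that no extra traffic is purchased; those assumptions and the function name are illustrative rather than taken from MultCloud's terms.

```python
import math


def plan_years_needed(total_gb: float, annual_cap_gb: float) -> int:
    """Number of plan years required to move `total_gb` under a yearly cap."""
    return math.ceil(total_gb / annual_cap_gb)


# A 10 TB archive (taken as 10,000 GB) under the two paid quotas in the table.
for cap in (1_200, 2_400):
    print(f"{cap} GB/year cap: {plan_years_needed(10_000, cap)} plan years")
```

Under those assumptions, the cap rather than your bandwidth determines how long a large migration takes.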

***

### Feature Comparison

| Feature | [**Blober**](https://blober.io/) | MultCloud |
| ---------------------------- | -------------------------------- | ------------------------- |
| Cloud-to-cloud transfer | ✅ Yes | Yes |
| Local filesystem integration | ✅ Yes | No (web-only) |
| GoPro Cloud support | ✅ **Yes (exclusive)** | ❌ No |
| Storage-optimized transfers | ✅ Yes | Generic |
| Workflow persistence | ✅ Yes | Scheduled tasks |
| Task history and logs | ✅ Yes | Basic dashboard |
| Metadata path templates | ✅ Yes | No |
| Resumable transfers | ✅ Yes | Limited |
| Sync (two-way) | Planned | Yes |
| Email-to-cloud (PDF) | No | Yes |
| Credential storage | ✅ Local only | MultCloud servers (OAuth) |
| Data path | ✅ Direct | Through MultCloud servers |

***

### Data Sovereignty and Privacy

This is where the difference is starkest. MultCloud requires OAuth access to your cloud accounts and routes all transferred data through its own servers. Their privacy page states data is “temporarily cached” during operations.

[**Blober**](https://blober.io/) takes the opposite approach:

* **Credentials never leave your machine** - no OAuth tokens stored on third-party servers

* **Data flows directly** between your local machine and each cloud provider

* **No account needed** - Blober works with a license key, offline

* **No data caching** - nothing is stored, buffered, or logged on remote servers

For users transferring personal photos, sensitive business documents, or media archives - the question is simple: do you want your data flowing through servers in Hong Kong, or directly from your machine to your cloud provider?

***

### GoPro Cloud: Only on Blober

MultCloud supports 30+ consumer cloud services (Google Drive, Dropbox, OneDrive, etc.) but does **not** support GoPro Cloud. If you need to move GoPro footage to professional storage like Backblaze B2, AWS S3, or Wasabi, MultCloud cannot help.

[**Blober**](https://blober.io/) is the only transfer tool with native GoPro Cloud integration - making it essential for photographers, videographers, and agencies managing action camera footage.

***

### When [**Blober**](https://blober.io/) is the Sharper Tool

Choose [**Blober**](https://blober.io/) if you:

* Need to move large volumes of data without annual caps

* Want predictable, one-time pricing - not $60–$100/year forever

* Prefer local execution over web-based SaaS

* Require data sovereignty - no files routing through third-party servers

* Need GoPro Cloud support (only Blober has it)

* Value detailed task history, resumable workflows, and metadata-based organization

* Care about credential security - no OAuth tokens stored in the cloud

# Blober vs rclone

> rclone's raw power, without the scripts, flags, or fragile workflows

### Overview

rclone is the industry-standard CLI tool for cloud storage automation among developers and sysadmins. It is extremely powerful, supports over 70 storage providers, and is completely free and open-source. Its tradeoff is complexity - every job requires flags, config files, and terminal expertise.

[**Blober**](https://blober.io/) is built for users who want rclone-level capability without managing flags, scripts, or terminal state. It replaces stateless CLI execution with persistent, visual workflows that anyone can set up and repeat.

***

### Interface and Usability

**rclone**

* Command-line only (experimental web GUI exists, but limited)

* Configuration files and flags - every job requires manual setup

* Excellent for scripting and cron-based automation

* Steep learning curve for non-technical users

* No built-in workflow persistence - you must manage your own scripts

[**Blober**](https://blober.io/)

* Native desktop GUI (Windows, macOS, Linux)

* Visual setup of sources, destinations, and filters

* Saved workflows with one-click execution

* Built-in task history with resumable state

* Designed for repeatability and clarity - no terminal required

***

### Feature Comparison

| Feature | [**Blober**](https://blober.io/) | rclone |
| ----------------------- | -------------------------------- | ------------------- |
| Interface | ✅ GUI | CLI |
| Provider count | Growing (9+) | 70+ |
| GoPro Cloud support | ✅ Yes | ❌ No |
| Local filesystem | ✅ Yes | Yes |
| Cloud-to-cloud | ✅ Yes | Yes |
| Workflow persistence | ✅ Yes | No (manual scripts) |
| Metadata path templates | ✅ Yes | Manual scripting |
| Task history & resume | ✅ Yes | Logs only |
| Encryption | Planned | Built-in |
| Automation | Limited | Extensive |
| Open source | No | Yes |
| Data path | ✅ Direct | Direct (local) |

***

### GoPro Cloud: A Blober Exclusive

rclone supports over 70 providers - but **GoPro Cloud is not one of them**. If you shoot with GoPro cameras and want to move your media from GoPro’s cloud to Backblaze B2, AWS S3, Wasabi, or your local NAS, rclone simply cannot help.

[**Blober**](https://blober.io/) is the only transfer tool with native GoPro Cloud integration, making it the obvious choice for photographers, videographers, action sports creators, and agencies managing GoPro media libraries.

***

### Workflow Example

**rclone** requires upfront configuration, careful flag selection, and scripting discipline to safely repeat jobs:

```bash

rclone copy remote:bucket/path dest:bucket/path \

--transfers 4 --checkers 8 --retries 3 \

--filter-from filters.txt --log-file transfer.log

```

Forget a flag? Change a path? The job silently behaves differently. There is no built-in history of what ran, when, or whether it succeeded.

[**Blober**](https://blober.io/) stores each workflow as a durable configuration with immutable execution history. If a transfer is interrupted, Blober resumes based on stored state rather than re-running a stateless command.

This difference becomes critical for:

* Long-running transfers over unreliable connections

* Media archives with thousands of files

* Users who run transfers infrequently and forget the exact flags

* Teams where multiple people need to trigger the same workflow
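
The difference between a stateless command and stored state can be sketched in a few lines of Python. This is only an illustration of the idea, not Blober's implementation: `run_transfer`, `copy_one`, and the state file are hypothetical names, and a real tool would track far more than a list of finished keys.

```python
import json
from pathlib import Path
from typing import Callable, Iterable


def run_transfer(
    keys: Iterable[str],
    copy_one: Callable[[str], None],
    state_file: Path,
) -> list[str]:
    """Copy each key once, recording progress so a rerun skips finished work."""
    # Load the keys that completed in earlier runs (empty on the first run).
    done = set(json.loads(state_file.read_text())) if state_file.exists() else set()
    copied = []
    for key in keys:
        if key in done:
            continue  # finished in a previous run; do not transfer again
        copy_one(key)  # hypothetical per-file copy; may raise if the connection drops
        done.add(key)
        copied.append(key)
        # Persist after every file so an interruption loses at most one item.
        state_file.write_text(json.dumps(sorted(done)))
    return copied
```

Running the same call again after a failure picks up at the first unfinished file, which is the behavior a plain shell command does not give you unless you script it yourself.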

***

### Data Sovereignty

Both rclone and [**Blober**](https://blober.io/) are local-first tools - your credentials stay on your machine. This is a shared advantage over SaaS competitors like Flexify and MultCloud, where credentials and potentially data flow through third-party servers.

Where [**Blober**](https://blober.io/) adds value over rclone:

* **No terminal exposure** - credentials are managed in a secured desktop app, not plaintext config files

* **Encrypted credential storage** - not a `~/.config/rclone/rclone.conf` file on disk

* **Visual audit trail** - every transfer logged with timestamps, file counts, and status

***

### Cost and Support

| Aspect | [**Blober**](https://blober.io/) | rclone |

| ----------- | -------------------------------- | -------------------- |

| Cost | One-time license | Free |

| Support | Product support | Community forums |

| Updates | Included with license | Community-driven |

| Target user | Creators, agencies, engineers | Engineers, sysadmins |

rclone being free is a genuine advantage. [**Blober**](https://blober.io/) earns its price by saving time, reducing errors, and opening cloud transfers to users who would never touch a terminal.

***

### When [**Blober**](https://blober.io/) Makes More Sense

Choose [**Blober**](https://blober.io/) if you:

* Prefer visual tools over terminal commands

* Want repeatable workflows without writing scripts

* Need GoPro Cloud support (only Blober has it)

* Need clarity, task history, and one-click resumption

* Transfer data occasionally but need it to work reliably every time

* Value convenience and productivity over maximum flexibility

* Want credentials stored securely - not in a plaintext config file

# Data Holders: How Blober Fits Your Workflow

> Managing terabytes across multiple clouds is the norm for data holders. Learn how Blober centralizes, migrates, and backs up your data without per-GB fees.

### Who Are Data Holders?

Data holders are individuals and organizations that accumulate, manage, and preserve large volumes of digital files as a core part of their work. They aren’t just storing files — they’re responsible for keeping data accessible, organized, and safe across years and even decades.

Data holders include:

* **Photographers and videographers** with terabytes of RAW footage and project archives

* **Researchers and academics** maintaining datasets, papers, and experimental outputs

* **Small businesses** managing client records, invoices, contracts, and media assets

* **IT administrators** responsible for infrastructure backups and compliance archives

* **Content creators** with libraries of video, audio, and design files across platforms

* **Legal and medical professionals** bound by retention requirements for sensitive records

* **Personal archivists** preserving family photos, home videos, and documents

What unites them is a common problem: **data grows, scatters, and becomes harder to manage over time.**

***

### The Data Holder’s Problem

Most data holders didn’t plan to end up with files in five different places. It happens organically:

1. **Files start local** — on a laptop, NAS, or external drive

2. **Cloud adoption fragments storage** — Google Drive for sharing, Dropbox for syncing, an S3 bucket for backups

3. **Platform lock-in creeps in** — GoPro Cloud holds your footage, iCloud holds your photos, OneDrive holds your documents

4. **Manual management breaks down** — folder naming conventions drift, backups become inconsistent, some files have three copies while others have none

The result is a **scattered, fragile data footprint** where no single tool gives you visibility across all your storage.

| Symptom | Root Cause |

| -------------------------------------------- | ----------------------------------------------------------- |

| “I know I have that file somewhere”          | Files spread across 3–5 providers with no unified view      |

| “My backup is months out of date”            | Manual backup processes that require constant attention     |

| “I’m paying for storage I barely use”        | Redundant copies in expensive tiers that should be archived |

| “I can’t move my data without paying egress” | Provider lock-in via egress fees and proprietary APIs       |

| “Organizing everything would take weeks”     | Flat folder structures with no metadata-driven automation   |

***

### How Blober Solves This

[**Blober**](https://blober.io/) is a desktop application purpose-built for data holders who need to move, organize, and back up files across cloud providers and local storage — without recurring fees.

#### 1. One Interface for All Your Storage

Blober connects to the storage providers data holders actually use:

| Provider | Typical Use Case |

| -------------------- | --------------------------------------------- |

| AWS S3 | Production infrastructure, enterprise backups |

| Backblaze B2 | Affordable long-term archive |

| Wasabi | Hot storage with no egress fees |

| Cloudflare R2 | CDN-adjacent delivery, zero egress |

| Google Cloud Storage | Workspace-integrated projects |

| Azure Blob Storage | Enterprise and compliance workloads |

| DigitalOcean Spaces | Dev team object storage |

| GoPro Cloud | Action camera footage (Blober exclusive) |

| Dropbox | File sharing and synchronization |

| Local / NAS | On-premise primary storage |

No other single tool covers this range — especially GoPro Cloud, which [**Blober**](https://blober.io/) is the only application to support.

#### 2. Direct Cloud-to-Cloud Transfers

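Instead of downloading files to local disk and re-uploading them as a second step, Blober streams data from the source provider through your machine to the destination in one pass. This matters for data holders because:

* **Saves time** - one transfer job replaces a separate download and re-upload

* **Saves disk space** - a 2 TB migration doesn’t need 2 TB of free local storage

* **Reduces failure points** - no half-downloaded files sitting on your local disk

Traffic still crosses your own connection, so your bandwidth and any ISP data cap still apply to large migrations.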

#### 3. Metadata-Driven Organization

Data holders accumulate files over years. Manually sorting them into folders is unsustainable. Blober supports path templates that use file metadata to auto-organize during transfer:

```plaintext

/{year}/{month}/{camera_model}/{filename}

```

A flat dump of 50,000 files becomes a clean archive:

```plaintext

/2025/06/HERO13 Black/GX015742.MP4

/2025/06/Canon EOS R5/IMG_4521.CR3

/2026/01/iPhone 15 Pro/IMG_0032.HEIC

```

This works for any transfer — cloud-to-cloud, cloud-to-local, or local-to-cloud.
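
As a rough illustration of how such a template expands, here is a minimal Python sketch. It assumes the placeholders behave like ordinary named format fields; Blober's actual template engine and its full set of fields are not described here, and `render_path` is a hypothetical helper.

```python
from datetime import datetime


def render_path(template: str, taken_at: datetime, camera_model: str, filename: str) -> str:
    """Fill a path template from one file's metadata."""
    return template.format(
        year=f"{taken_at.year:04d}",
        month=f"{taken_at.month:02d}",   # zero-padded so folders sort correctly
        camera_model=camera_model,
        filename=filename,
    )


template = "/{year}/{month}/{camera_model}/{filename}"
print(render_path(template, datetime(2025, 6, 14), "HERO13 Black", "GX015742.MP4"))
# prints: /2025/06/HERO13 Black/GX015742.MP4
```

The capture date in the example is made up; only the year and month affect the resulting path.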

#### 4. Scheduled and Resumable Transfers

Backup workflows for data holders need to be reliable, not heroic. Blober supports:

* **Resumable transfers** — if your connection drops or your machine restarts, pick up where you left off

* **Incremental syncs** — only transfer files that are new or changed since the last run

* **Large-file handling** — multi-part uploads for files in the tens of gigabytes

No babysitting required. Set up a transfer, let it run, and come back to a completed job.
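
The idea behind an incremental sync can be sketched as a comparison of two listings. This is a simplified assumption of how "new or changed" might be decided (by size and modification time); it is not a description of Blober's internals, and `FileInfo` and `files_to_transfer` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileInfo:
    size: int          # bytes
    modified: float    # seconds since the epoch


def files_to_transfer(source: dict[str, FileInfo], dest: dict[str, FileInfo]) -> list[str]:
    """Return source paths that are missing from dest or look different there."""
    pending = []
    for path, src in source.items():
        dst = dest.get(path)
        # Transfer when the file is new, its size differs, or the source is newer.
        if dst is None or dst.size != src.size or dst.modified < src.modified:
            pending.append(path)
    return sorted(pending)
```

Comparing checksums instead of timestamps would be stricter, at the cost of reading every file on both sides.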

#### 5. One-Time Pricing

Most cloud migration tools charge per-GB or require annual subscriptions with data caps. For data holders who move terabytes regularly, those costs compound:

| Tool | Pricing Model | Cost for 10 TB/year |

| ---------- | -------------------------- | --------------------------------------- |

| Flexify.io | \~$0.03/GiB per migration | **\~$300+** (plus egress) |

| MultCloud  | $99.98/year for 2.4 TB cap | **\~$500** (five 2.4 TB allotments)     |

| rclone | Free but manual | **$0** (but hours of CLI configuration) |

| **Blober** | **One-time purchase** | **One price, unlimited transfers** |

You buy [**Blober**](https://blober.io/) once. Transfer 1 TB or 100 TB — the price doesn’t change.
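
To see how per-GB and capped pricing scale, the listed rates can be run through a short Python calculation. The rates are the approximate figures from the table; treating 1 TB as 1024 GiB and assuming capped plans can be stacked in whole units are simplifications made for this sketch.

```python
import math

TB_PER_YEAR = 10                  # data moved per year, as in the table
GIB_PER_TB = 1024                 # assumption: treat 1 TB as 1024 GiB

FLEXIFY_USD_PER_GIB = 0.03        # listed rate, approximate
MULTCLOUD_USD_PER_PLAN = 99.98    # listed annual price
MULTCLOUD_TB_PER_PLAN = 2.4       # listed traffic cap per plan


def flexify_cost(tb: float) -> float:
    """Per-GiB pricing scales linearly with data moved (provider egress not included)."""
    return tb * GIB_PER_TB * FLEXIFY_USD_PER_GIB


def multcloud_cost(tb: float) -> float:
    """Capped plans are bought in whole units until the combined cap covers the data."""
    plans = math.ceil(tb / MULTCLOUD_TB_PER_PLAN)
    return plans * MULTCLOUD_USD_PER_PLAN


print(f"Flexify.io: ${flexify_cost(TB_PER_YEAR):.2f}")    # prints: Flexify.io: $307.20
print(f"MultCloud:  ${multcloud_cost(TB_PER_YEAR):.2f}")  # prints: MultCloud:  $499.90
```

Both figures grow with volume, which is the point of the comparison: a flat license does not.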

***

### Real-World Workflows

#### The Freelance Videographer

**Setup:** 8 TB of footage across GoPro Cloud, a local NAS, and Google Drive. Delivers finals via Dropbox.

**With Blober:**

* Connects GoPro Cloud and pulls all footage to Backblaze B2 as a cold archive

* Moves finished projects from local NAS to Cloudflare R2 for client delivery

* Uses path templates to organize by project date and camera model

* Runs periodic syncs from Google Drive to B2 to keep a second backup

**Result:** One tool replaces four manual processes. Total cost: one Blober license.

#### The Small Business IT Admin

**Setup:** 500 GB of compliance documents in Azure Blob Storage. Daily operational files in Google Workspace. Regulatory requirement for off-site backup.

**With Blober:**

* Transfers compliance archive from Azure to Backblaze B2 as a secondary backup

* Syncs critical Google Drive folders to a local NAS nightly

* Uses Blober’s incremental sync so only changed files move each day

**Result:** Meets audit requirements for geographic redundancy without provisioning a second enterprise cloud account.

#### The Research Lab

**Setup:** 12 TB of experimental datasets in AWS S3. New data generated weekly. Grants require data preservation for 10 years.

**With Blober:**

* Migrates completed datasets from S3 Standard to Backblaze B2 (roughly 70-75% lower storage cost at list prices)

* Keeps active datasets in S3 for compute-adjacent access

* Uses metadata templates to organize by experiment ID and date

* Resumable transfers handle multi-GB dataset files without corruption

**Result:** Storage costs drop dramatically while preservation requirements are met.

***

### Why Not Just Use rclone?

rclone is a powerful open-source CLI tool, and many data holders start there. But it has real limitations for ongoing data management:

| Capability | rclone | Blober |

| ---------------------------- | ------------------------- | ----------------------- |

| GUI for browsing files | No (CLI only) | Yes |

| GoPro Cloud support | No | Yes (exclusive) |

| Dropbox support | Yes | Yes |

| Visual transfer progress | Limited | Full progress dashboard |

| Resumable multi-part uploads | Partial | Built-in |

| Path template organization | Manual scripting | Visual template builder |

| Error handling and retry | Config flags | Automatic |

| Setup time | Hours (config per remote) | Minutes (OAuth flows) |

rclone is great for scripted, automated pipelines. [**Blober**](https://blober.io/) is built for data holders who want reliable transfers without writing shell scripts.

***

### Getting Started as a Data Holder

1. **Audit your storage** — list every provider and local device where you keep files

2. **Identify your archive tier** — choose an affordable destination like Backblaze B2 or Wasabi for long-term storage

3. **Connect everything in Blober** — add each provider via OAuth or API key

4. **Set up your first migration** — pick a source, pick a destination, configure a path template

5. **Let Blober handle the rest** — resumable transfers, incremental syncs, and metadata organization do the heavy lifting

***

### Your Data, Your Infrastructure

Data holders shouldn’t need a subscription to manage their own files. [**Blober**](https://blober.io/) runs locally on your machine — your credentials never pass through third-party servers, your transfer bandwidth isn’t metered, and your workflow isn’t gated by monthly caps.

One license. Unlimited providers. Unlimited data.

**[Get Blober](https://blober.io/)** and take control of your data workflow.

# Data Sovereignty: Why Your Cloud Transfer Tool Matters

> Where your credentials live, where your data flows, and why local-first architecture is a trust advantage - not just a technical detail.

### Your Transfer Tool Is a Trust Decision

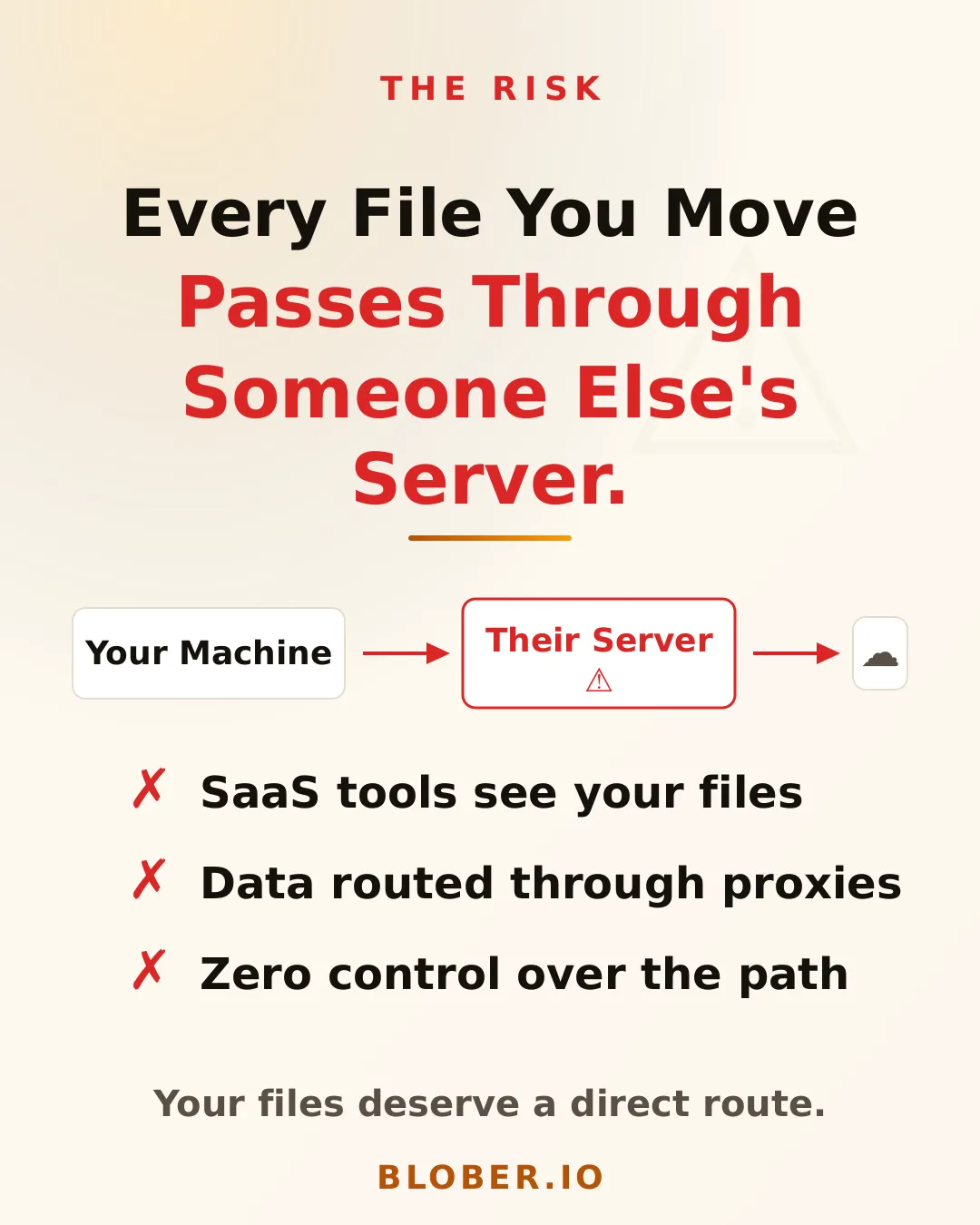

When you move data between cloud providers, your transfer tool has access to everything: your storage credentials, your file contents, your metadata. The architecture of that tool - where it runs, where credentials are stored, where data flows - determines whether you maintain control or hand it to a third party.

Most people evaluate migration tools on speed and features. Few ask the harder question: **who else can see my data while it’s in transit?**

***

### The Three Architectures

#### 1. SaaS (Cloud-Hosted)

Tools like **Flexify.io** and **MultCloud** run on their own servers. Your credentials are stored in their infrastructure. Your data routes through their systems during transfer.

| Concern | Flexify.io | MultCloud |

| -------------------- | ------------------------------ | ----------------------------- |

| Credential storage | Flexify servers | MultCloud servers (Hong Kong) |

| Data path | Through Flexify infrastructure | Through MultCloud servers |

| Account required | Yes | Yes |

| OAuth token storage | Server-side | Server-side |

| Offline operation | No | No |

| Privacy policy scope | US (Florida) | Hong Kong |

This doesn’t mean these services are malicious. But it means:

* A **third party stores your cloud credentials** - API keys, OAuth tokens, or access grants

* Your **data transits infrastructure you don’t control** - introducing a man-in-the-middle by design

* You’re subject to **their privacy policy and jurisdiction** - which may change without notice

* A **breach of their systems exposes your credentials** and potentially your data

For personal photos, this might feel acceptable. For business data, media archives, legal documents, or HIPAA/GDPR-adjacent workloads - it’s a serious risk.

#### 2. CLI (Local, But Exposed)

**rclone** runs locally on your machine. Your data goes directly to and from each cloud provider. This is a genuine trust advantage over SaaS tools.

However, rclone stores credentials in a **plaintext configuration file** (`~/.config/rclone/rclone.conf`). Anyone with access to your filesystem - malware, another user, a compromised backup - can read your cloud credentials directly.

rclone does offer an encryption option for the config file, but it’s opt-in and requires manual setup. Most users leave it in plaintext.
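
If you already use rclone, a quick check can tell you which state your config is in. The sketch below assumes that an encrypted rclone config contains a line reading `RCLONE_ENCRYPT_V0:`, which is how rclone has marked encrypted configs to my knowledge; verify against your rclone version. The path is the default mentioned above and may differ on your system (`rclone config file` prints the actual location). `config_is_encrypted` is a hypothetical helper.

```python
from pathlib import Path

# Assumed marker line that rclone writes at the top of an encrypted config.
ENCRYPTED_MARKER = "RCLONE_ENCRYPT_V0:"


def config_is_encrypted(path: Path) -> bool:
    """Return True if the file carries the encrypted-config marker line."""
    with path.open("r", encoding="utf-8", errors="replace") as handle:
        for line in handle:
            if line.strip() == ENCRYPTED_MARKER:
                return True
    return False


if __name__ == "__main__":
    conf = Path.home() / ".config" / "rclone" / "rclone.conf"
    if conf.exists():
        print("encrypted" if config_is_encrypted(conf) else "plaintext")
    else:
        print(f"no config found at {conf}")
```

A "plaintext" result means anything that can read the file can read the remote settings stored in it.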

#### 3. Desktop App (Local + Secured)

[**Blober**](https://blober.io/) runs entirely on your machine with encrypted credential storage. Your data flows directly between your machine and each cloud provider. No intermediary.

| Concern | [**Blober**](https://blober.io/) |

| ------------------- | -------------------------------- |

| Credential storage | ✅ Local, encrypted |

| Data path | ✅ Direct (no middleman) |

| Account required | ✅ No (license key only) |

| OAuth token storage | ✅ Local only |

| Offline operation | ✅ Yes |

| Jurisdiction | ✅ Your machine, your rules |

***

### Why This Matters

#### Credential Exposure

Your cloud storage credentials are the keys to your kingdom. Unless it is tightly scoped, an AWS access key or a Google OAuth token doesn’t just grant transfer access - it can grant **full access** to your storage: read, write, delete, list. If a SaaS provider’s database is breached, your credentials are in that breach.

With [**Blober**](https://blober.io/), credentials never leave your machine. There is no remote database to breach.

#### Data in Transit

When a SaaS tool transfers your files, those files pass through their servers. Even with TLS encryption in transit, the data is **decrypted on their infrastructure** before being re-encrypted and sent to the destination. This is not end-to-end encryption - it’s hop-by-hop.

With [**Blober**](https://blober.io/), data flows directly from source to your machine to destination. No hops through third-party infrastructure.

#### Jurisdiction and Compliance

MultCloud operates from Hong Kong; Flexify.io operates from Florida, USA. Each jurisdiction has different data protection laws, government access rules, and breach notification requirements. When your data or credentials live on their servers, you’re subject to their jurisdiction - not yours.

[**Blober**](https://blober.io/) runs on your hardware, in your jurisdiction. No foreign servers. No cross-border data flow through third parties.

#### Subscription as Leverage

SaaS tools require active accounts. Cancel your subscription, and you lose access to your workflows, task history, and potentially your configured connections. This creates a soft lock-in that has nothing to do with the quality of the tool.

[**Blober**](https://blober.io/) is a one-time purchase. No account, no subscription, no leverage.

***

### Comparison Summary

| Dimension | SaaS (Flexify, MultCloud) | CLI (rclone) | [**Blober**](https://blober.io/) |

| --------------------- | ------------------------- | -------------------- | -------------------------------- |

| Credentials           | Third-party servers       | Plaintext by default | ✅ Encrypted local                |

| Data path | Through vendor servers | Direct | ✅ Direct |

| Account required | Yes | No | ✅ No |

| Offline capable | No | Yes | ✅ Yes |

| Risk of vendor breach | Exposes your credentials | N/A | ✅ N/A |

| Jurisdiction | Vendor’s country | Your machine | ✅ Your machine |

| Subscription lock-in | Yes | No | ✅ No |

***

### Who Should Care?

* **Freelancers and agencies** handling client data - you have a professional duty to control where that data flows

* **Photographers and videographers** with irreplaceable media - GoPro footage, wedding archives, production masters

* **Small businesses** without dedicated security teams - reducing your attack surface matters

* **Anyone under GDPR, HIPAA, or SOC 2 obligations** - third-party data processors require disclosure and contractual agreements

* **Privacy-conscious individuals** who simply want to own their data pipeline

***

### So What?

Your migration tool is not a neutral pipe. It’s an active participant in your data flow. Its architecture determines whether your credentials are stored remotely, whether your files transit foreign servers, and whether you maintain sovereignty over your data.

[**Blober**](https://blober.io/) is designed around a simple principle: **your data, your machine, your rules.**

No accounts. No SaaS intermediaries. No credential exposure. One-time purchase, local execution, direct transfers.

[Get Blober →](https://blober.io/)

# Transfer GoPro Cloud Files in 45 Seconds with Blober

> Connect GoPro Cloud, browse your media, select individual files, multiple files, or an entire directory, and create a transfer workflow in 45 seconds.

## 45-Second Demo

This video shows the full process of creating a Blober workflow with GoPro Cloud as the source.

[Watch the 45-second demo on YouTube](https://youtube.com/watch?v=TLvZ4Xo9c-g)

## What’s Shown

### 1. Connect GoPro Cloud

Select **GoPro Cloud** as your source, click **Open GoPro Login**, and sign in. Blober captures your session. No API keys, no config files, no CLI.

### 2. Browse Your Media

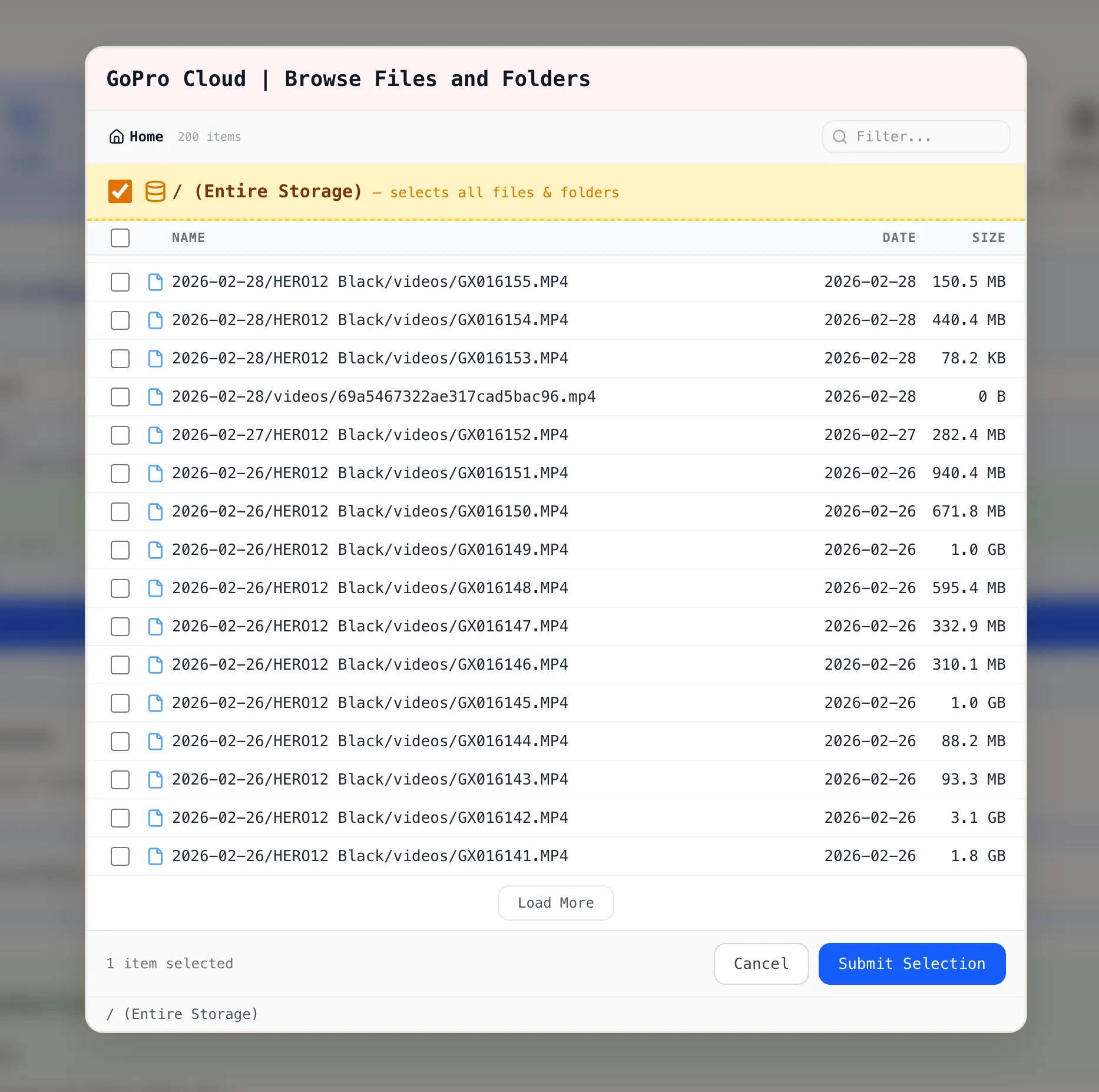

Click **Browse Files and Folders**. Blober loads your GoPro Cloud library. Files are listed with date and size.

You can select:

* **Individual files** by clicking a single file

* **Multiple files** by checking several files across folders

* **Entire directory** by ticking the **/ (Entire Storage)** checkbox

### 3. Create the Workflow

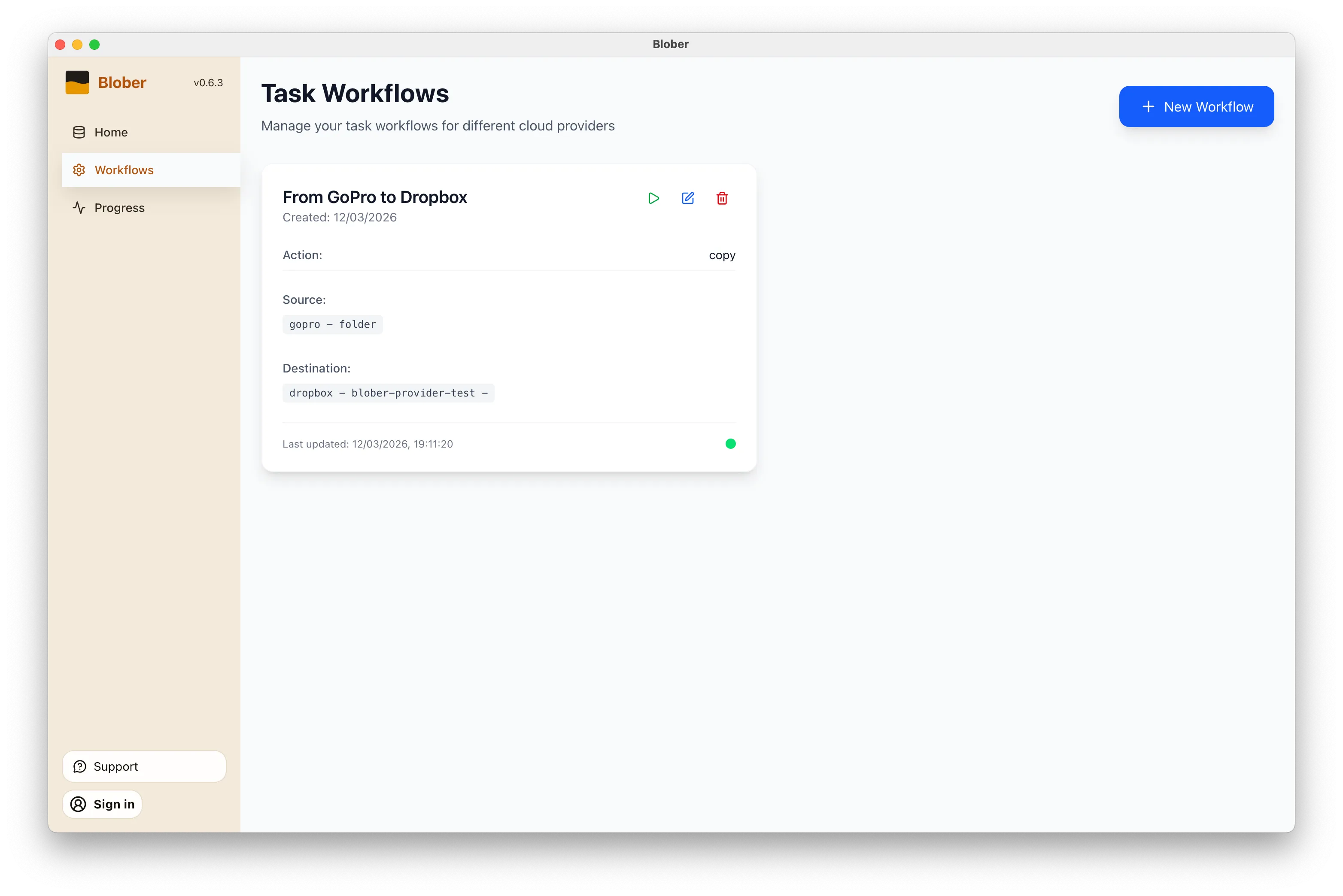

Click **Submit Selection**. The workflow editor shows your GoPro Cloud source with the selected items. Pick your destination (local disk, Backblaze B2, AWS S3, Dropbox, or another supported provider), configure options, and click **Save Workflow**.

### 4. Run It

Close the workflow editor with the **X** button in the top-right corner. On the Workflows page, click the green **Run** button on your workflow card. Blober starts the transfer with parallel downloads, progress tracking, and automatic resume.

## Why It Matters

GoPro’s web portal limits batch downloads to 25 files at a time, bundled as ZIPs. Large downloads often fail. There is no bulk export and no “Download All” button.

**Blober is the only tool that connects to GoPro Cloud.** rclone, MultCloud, and Flexify do not support GoPro as a source.

* **No manual downloads.** Files move directly from GoPro Cloud to your destination.

* **No file limits.** Transfer 10 files or 10,000 in one run.

* **No subscription.** Blober is a one-time purchase.

* **No middleman.** Everything runs on your machine. Your credentials stay local.

## Get Started

1. [Download Blober](https://blober.io/) (macOS, Windows, Linux)

2. Connect your GoPro Cloud account

3. Create a workflow and run it

# Migrating 100M+ Files from DigitalOcean Spaces to Backblaze B2

> A practical breakdown of costs, timelines, and the smartest approach for moving a massive object storage dataset to Backblaze B2.

### The Scenario

A media company has **25TB** of data spread across **120 million files** in DigitalOcean Spaces. Their bill runs roughly **$500/month**. They want to move everything to Backblaze B2 to cut costs and get more flexibility.

This is a real-world pattern we see a lot. Let’s walk through what it actually takes.

***

### What It Costs

| Item | Details | Estimated Cost |

| ----------------------- | --------------------------------------------------- | -------------- |

| **Blober License** | One-time purchase | **$49** |

| **DigitalOcean Egress** | \~24TB billable at $0.01/GiB (first 1TB free) | **\~$240** |

| **Backblaze Ingress** | Free. Backblaze never charges for uploads | **$0** |

| **Backblaze API Calls** | Uploads are free Class A calls, minor listing costs | **\~$2** |

| **Total** | | **\~$291** |

After migrating, the monthly bill drops from \~$500 on DigitalOcean to \~$150 on Backblaze B2. At \~$350 saved per month, the \~$291 migration pays for itself in under a month.
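
The payback arithmetic can be checked with a few lines of Python. All inputs are the approximate figures from the table and the paragraph above; the 30-day month is an assumption of this sketch.

```python
ONE_TIME_COSTS = {
    "Blober license": 49,
    "DigitalOcean egress": 240,
    "Backblaze ingress": 0,
    "Backblaze API calls": 2,
}
MONTHLY_BEFORE = 500   # DigitalOcean Spaces, approximate
MONTHLY_AFTER = 150    # Backblaze B2, approximate

total = sum(ONE_TIME_COSTS.values())
monthly_saving = MONTHLY_BEFORE - MONTHLY_AFTER
payback_days = total / monthly_saving * 30   # assumes a 30-day month

print(total, monthly_saving, round(payback_days))  # prints: 291 350 25
```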

***

### About Egress Fees

This is where it gets interesting. Backblaze actively wants people to switch to their platform and they back that up with real programs:

* **Free egress up to 3x your average monthly storage** on B2, which means once you’re on Backblaze, downloading your own data doesn’t cost extra in most scenarios.

* **Unlimited free egress** through CDN and compute partners like Cloudflare, Fastly, Bunny.net, and Vultr.

* **Assisted data migration** is listed as a standard B2 feature on their [pricing page](https://www.backblaze.com/cloud-storage/pricing).

* **Universal Data Migration** is available for larger committed contracts (50TB+ on pay-as-you-go, or included with B2 Reserve annual plans).

Backblaze explains their philosophy well in this blog post: [Cloud Egress Fees: What They Are and How to Reduce Them](https://www.backblaze.com/blog/cloud-101-data-egress-fees-explained/). The short version is that they believe egress fees are vendor lock-in, and they want to make switching easy.

Even if your dataset is under the 50TB threshold, it’s worth contacting their [sales team](https://www.backblaze.com/contact-sales/cloud-storage). With a 25TB dataset and willingness to commit for 12 months, there’s a solid chance they’ll help reduce or cover the DigitalOcean egress fees to get you onboarded.

***

### How Long Does It Take

Let’s be honest here. 25TB is a lot of data.

Every file needs its own download from the source and upload to the destination, on top of the paginated listing calls that enumerate the bucket. Each round-trip carries network latency regardless of file size. When you multiply that per-object overhead across 120 million files, with 25TB of bandwidth on top, the aggregate time adds up fast.

For a client-side migration where data streams through your local machine, you’re looking at:

* **Several weeks of continuous runtime** depending on your connection speed and latency

* Your machine needs to stay on and connected the entire time

* If your ISP has a monthly data cap, 25TB will almost certainly exceed it

* 16GB+ RAM recommended for handling the file listing at this scale

This isn’t a Blober limitation. Any client-side tool (rclone, Cyberduck, whatever) will face the same physics. Data has to travel from DigitalOcean’s datacenter to your machine, then from your machine to Backblaze’s datacenter. That’s two full trips through your ISP.
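
A back-of-the-envelope model shows where "several weeks" comes from. The Python sketch below takes the slower of two limits: raw bandwidth and per-file request overhead. The dataset size and file count come from the scenario; the link speed, per-file overhead, and concurrency are assumed values for illustration, and `estimate_days` is a hypothetical helper, so treat the result as an order-of-magnitude estimate.

```python
def estimate_days(total_bytes: float, file_count: int, link_mbps: float,
                  per_file_overhead_s: float, parallel_transfers: int) -> float:
    """Rough lower bound: the slower of raw bandwidth and per-object overhead."""
    bandwidth_s = total_bytes * 8 / (link_mbps * 1_000_000)
    overhead_s = file_count * per_file_overhead_s / parallel_transfers
    return max(bandwidth_s, overhead_s) / 86_400


days = estimate_days(
    total_bytes=25e12,         # 25 TB, from the scenario
    file_count=120_000_000,    # 120 million files, from the scenario
    link_mbps=100,             # assumed home connection speed
    per_file_overhead_s=0.2,   # assumed request latency per file (download + upload)
    parallel_transfers=16,     # assumed concurrency
)
print(f"{days:.1f} days")      # prints: 23.1 days
```

With these assumptions the link is the bottleneck; on a faster link the per-file overhead takes over (about 17 days here), which is why small-file datasets stay slow even on good connections.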

***

### The Smart Approach: Two Phases

#### Phase 1: Let the Datacenters Do the Heavy Lifting

Contact Backblaze’s sales team and ask about their assisted migration options. For datasets at this scale, they partner with migration services that can move data directly between datacenters at speeds your home connection can’t match. What takes weeks on a home connection can take days or even hours on a datacenter link.

Reach out here: [Backblaze Sales](https://www.backblaze.com/contact-sales/cloud-storage)

#### Phase 2: Use Blober for Everything After

Once the initial bulk migration is done, [Blober](https://blober.io/) becomes your daily tool for managing files across providers. New uploads, folder syncs, log rotations, moving files between buckets, all handled from your desktop with no per-GB fees and no subscriptions. Your credentials stay on your machine and never touch a third-party server.

***

### Monthly Cost Comparison (Post-Migration)

| | DigitalOcean Spaces | Backblaze B2 |

| ------------------- | ------------------- | -------------------------- |

| **Storage (25TB)** | \~$500/mo | \~$150/mo |

| **Egress (3TB/mo)** | \~$30/mo | Free (within 3x allowance) |

| **Total** | **\~$530/mo** | **\~$150/mo** |

| **Annual** | **\~$6,360/yr** | **\~$1,800/yr** |

That’s about **$4,500 saved per year**, every year.

***

### Bottom Line

For large-scale one-time migrations, use Backblaze’s own migration programs. They want your business and they’ll often help you get there.

For everything after that, [Blober](https://blober.io/) gives you a one-time $49 license to manage, sync, and move files across any supported provider, with no recurring costs and no third party ever touching your credentials.

# How to Move GoPro Cloud Media to Dropbox the Easy Way

> GoPro Cloud makes it hard to get your own footage out. Blober connects directly to GoPro Cloud and transfers your photos and videos to Dropbox in minutes, with no manual downloads or ZIP files.

## Why Move Your GoPro Footage to Dropbox?

GoPro Cloud (included with GoPro Plus / GoPro Premium) stores your camera footage automatically. It’s convenient, until you need to actually do something with it.

**The problems with keeping everything in GoPro Cloud:**

* **No easy bulk export.** GoPro’s web portal limits batch downloads to 25 files at a time, bundled as a ZIP. Large downloads frequently fail or time out.

* **No third-party integrations.** No other file transfer tool (rclone, MultCloud, Flexify) can connect to GoPro Cloud. You’re stuck with the GoPro web interface.

* **Subscription lock-in.** Cancel GoPro Plus and you lose access to your footage. Your media is held hostage by a recurring charge.

* **No redundancy.** If GoPro changes their cloud offering or shuts it down, you have no backup unless you’ve already downloaded everything manually.

**Why Dropbox makes a good destination:**

* **Accessible everywhere.** Desktop, mobile, web. Dropbox works across all devices.

* **Selective sync.** Keep large video files in the cloud and only download what you need locally.

* **Sharing built in.** Send footage to clients, collaborators, or editors with a link.

* **Established and reliable.** Dropbox has been around since 2007 and isn’t going anywhere.

* **Integration with editing tools.** Many video editors and photo apps integrate directly with Dropbox.

Moving your footage from GoPro Cloud to Dropbox gives you a second copy in a provider you control, one that doesn’t depend on a GoPro subscription to access.

## How Blober Makes It Easy

[Blober](https://blober.io/) is the only desktop app that connects directly to GoPro Cloud. No browser extensions, no manual downloads, no CLI config files. You create a workflow, press play, and your media transfers automatically.

[Play](https://youtube.com/watch?v=NTqqf4sKbpk)

### Step 1: Create a Workflow

Open Blober, go to the **Workflows** page, and click **New Workflow**. Select **GoPro** as the source and **Dropbox** as the destination. Pick the source folders you want to transfer and the destination folder where they should land.

### Step 2: Run It

Click the play button on your workflow. Blober connects to both providers and starts transferring files immediately. Every file (photos, videos, time-lapses) is transferred from GoPro Cloud to Dropbox without being saved to your local disk first, unless you want a local copy as well.

### Step 3: Monitor Progress

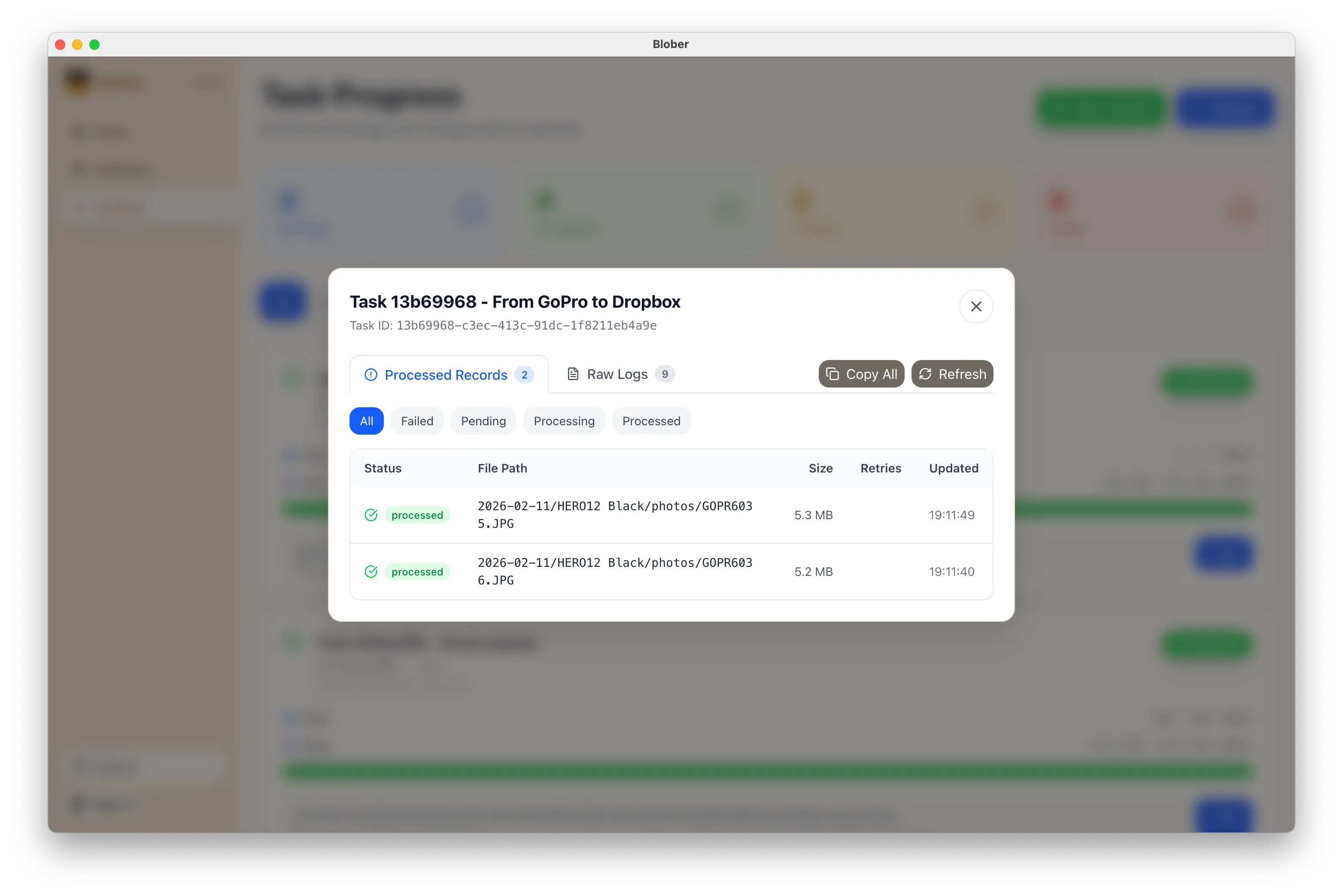

The **Progress** page shows exactly what’s happening: files transferred, bytes moved, current speed, and estimated time remaining. If something goes wrong, you can pause, retry, or cancel at any time.

### What Makes This Different

* **No manual work.** You don’t download ZIPs, unzip them, then re-upload to Dropbox. Blober handles the entire pipeline.

* **No file limits.** Transfer 10 files or 10,000. Blober processes them all in one run.

* **No subscription.** Blober is a one-time purchase. No monthly fees, no per-GB transfer charges, no limits on how many times you run a workflow.

* **Runs locally.** Your credentials stay on your machine. Files transfer directly between providers. Nothing passes through Blober’s servers.

## When to Use This

* **Before canceling GoPro Plus.** Get your footage out before you lose access.

* **Regular backups.** Set up a workflow now and run it whenever you want a fresh copy in Dropbox.

* **Switching providers.** Moving off GoPro Cloud entirely? Transfer everything to Dropbox first, then cancel.

* **Sharing with a team.** Put footage in a shared Dropbox folder so editors and collaborators can access it immediately.

## Get Started

1. [Download Blober](https://blober.io/) (available for macOS, Windows, and Linux)

2. Connect your GoPro and Dropbox accounts

3. Create a workflow and press play

That’s it. Your GoPro footage in Dropbox in minutes, not hours.

# Stop Paying Rent to Move Your Own Files

> You uploaded terabytes to the cloud. Now your provider charges you to leave. Here's how Blober lets you escape vendor lock-in with a single payment — no subscriptions, no data caps, no CLI.

## The Trap

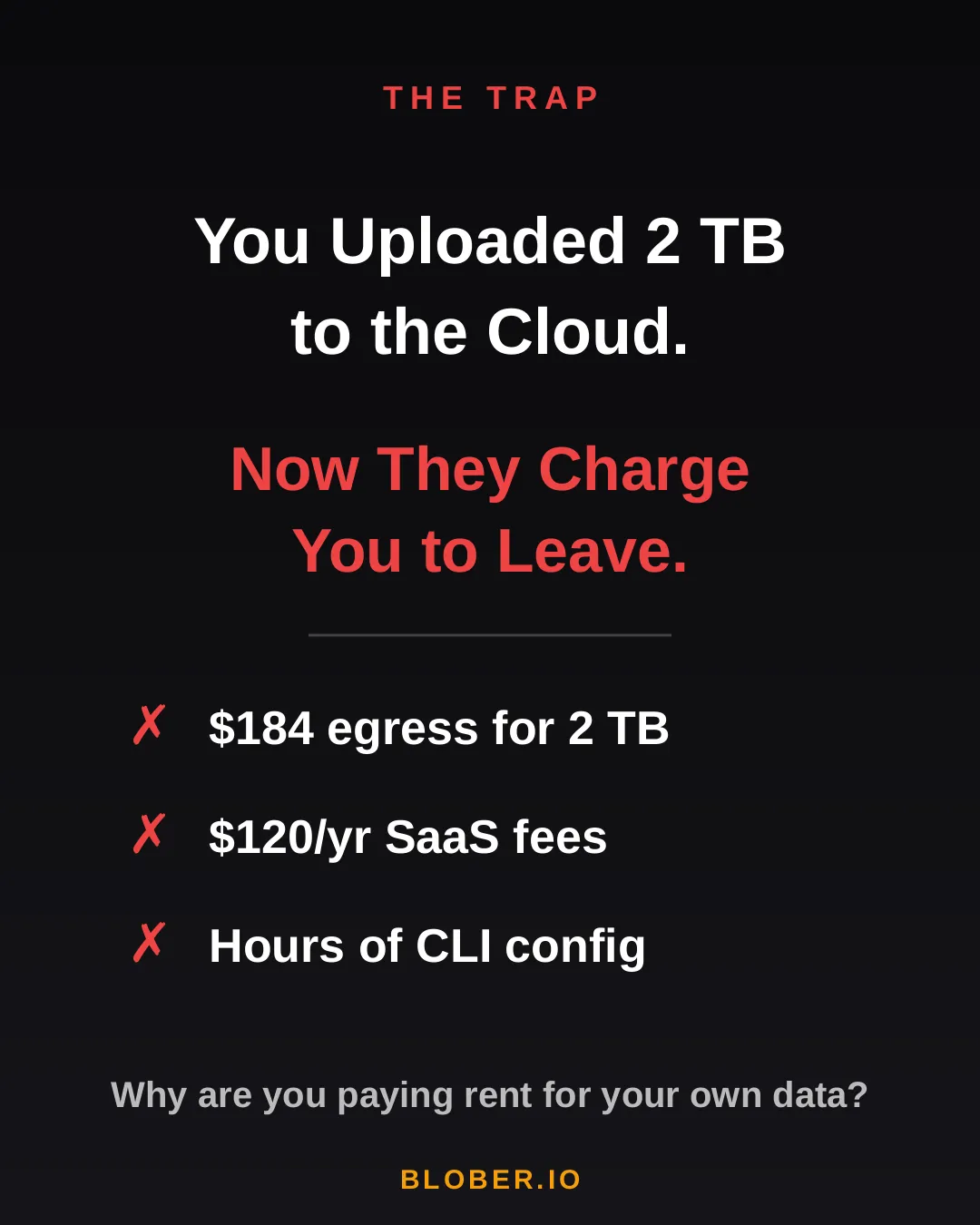

You uploaded 2 TB of photos, videos, and backups to the cloud. Life was good — until you wanted to move them somewhere else.

Suddenly, you’re hit with **egress fees**, per-GB migration charges, and the realization that your cloud provider has been counting on you never leaving. It’s your data. But moving it costs real money — every single time.

AWS charges \~$0.09/GB for egress. That’s **$184 just to download 2 TB of your own files**. Want to use a SaaS migration tool? That’s another $10–20/month, with transfer caps. Prefer the open-source CLI route? Clear your afternoon — you’ll need it for YAML configs, credential files, and provider-specific quirks.
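As a rough check on the egress figure, the calculation can be sketched as below. It assumes the approximate $0.09/GB rate quoted above and 1 TB = 1,024 GB, which is the convention that reproduces the $184 figure. Real AWS pricing is tiered and varies by region, so treat the result as an estimate rather than a quote.

```python
EGRESS_RATE_USD_PER_GB = 0.09  # approximate AWS rate quoted above
GB_PER_TB = 1024               # assumption: binary convention, matches the $184 figure


def egress_cost(terabytes: float, rate_per_gb: float = EGRESS_RATE_USD_PER_GB) -> float:
    """Estimate the flat-rate egress charge in USD for downloading `terabytes` of data."""
    return terabytes * GB_PER_TB * rate_per_gb


cost_2tb = egress_cost(2)
print(f"Downloading 2 TB: about ${cost_2tb:.2f}")
```

Passing a different `rate_per_gb` lets you compare providers with other egress prices.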

***

## The Math

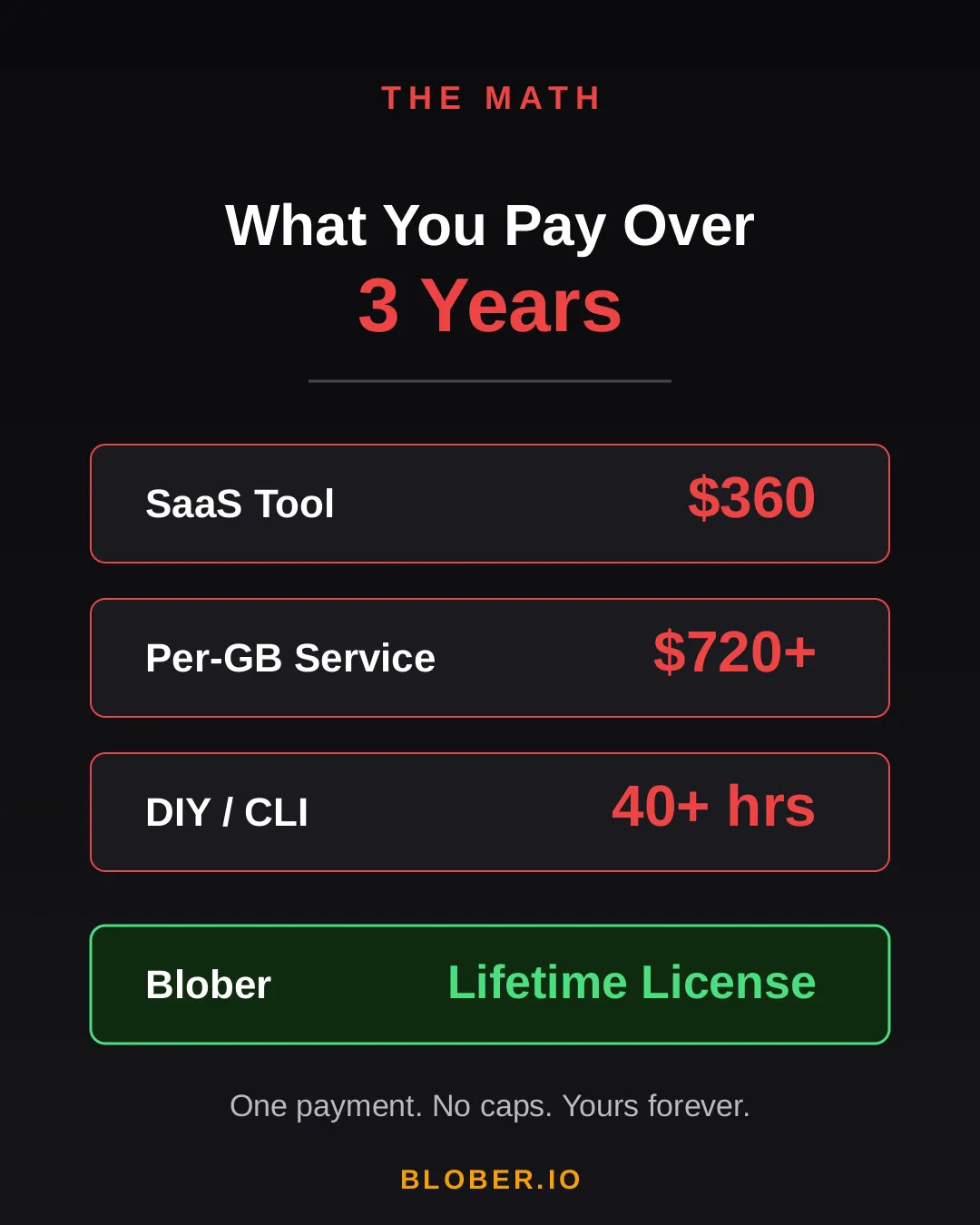

Let’s talk real numbers. Over three years, here’s what you’ll pay using common approaches:

| Approach | 3-Year Cost | Catch |
| ----------------------- | --------------- | ------------------------------------------- |
| **SaaS Migration Tool** | \~$360 | Monthly sub + data caps |
| **Per-GB Service** | \~$720+ | $0.03/GB, billed every transfer |
| **DIY with CLI** | 40+ hours | Config per provider, no UI, breaks silently |
| **Blober** | **One payment** | Unlimited transfers. Forever. |

The subscription model is designed to extract value from you month after month. The per-GB model punishes you for having more data. The CLI path trades money for your time.
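To see what usage produces numbers of this size, here is a small sketch. The inputs are assumptions for illustration: the $10/month fee is the low end of the SaaS range mentioned earlier, and the transfer volume is back-calculated from the table's \~$720 at $0.03/GB rather than stated anywhere in the article.

```python
MONTHS = 36  # three years

# Assumption: low end of the $10–20/month SaaS range mentioned earlier.
saas_monthly_fee = 10.0
saas_total = saas_monthly_fee * MONTHS

# Rate from the table; the volume is derived from it, not quoted by the article.
per_gb_rate = 0.03
per_gb_total = 720.0
implied_volume_gb = per_gb_total / per_gb_rate

print(f"SaaS subscription over {MONTHS} months: ${saas_total:,.0f}")
print(f"Volume that costs ${per_gb_total:,.0f} at ${per_gb_rate}/GB: {implied_volume_gb:,.0f} GB")
```

In other words, the per-GB figure corresponds to moving roughly 24 TB in total over the three years; heavier use scales the bill linearly.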

Blober breaks the cycle. **Pay once. Transfer as much as you want, as many times as you want.** No meter running. No renewal emails. No “upgrade to unlock more.”

***

## The Escape

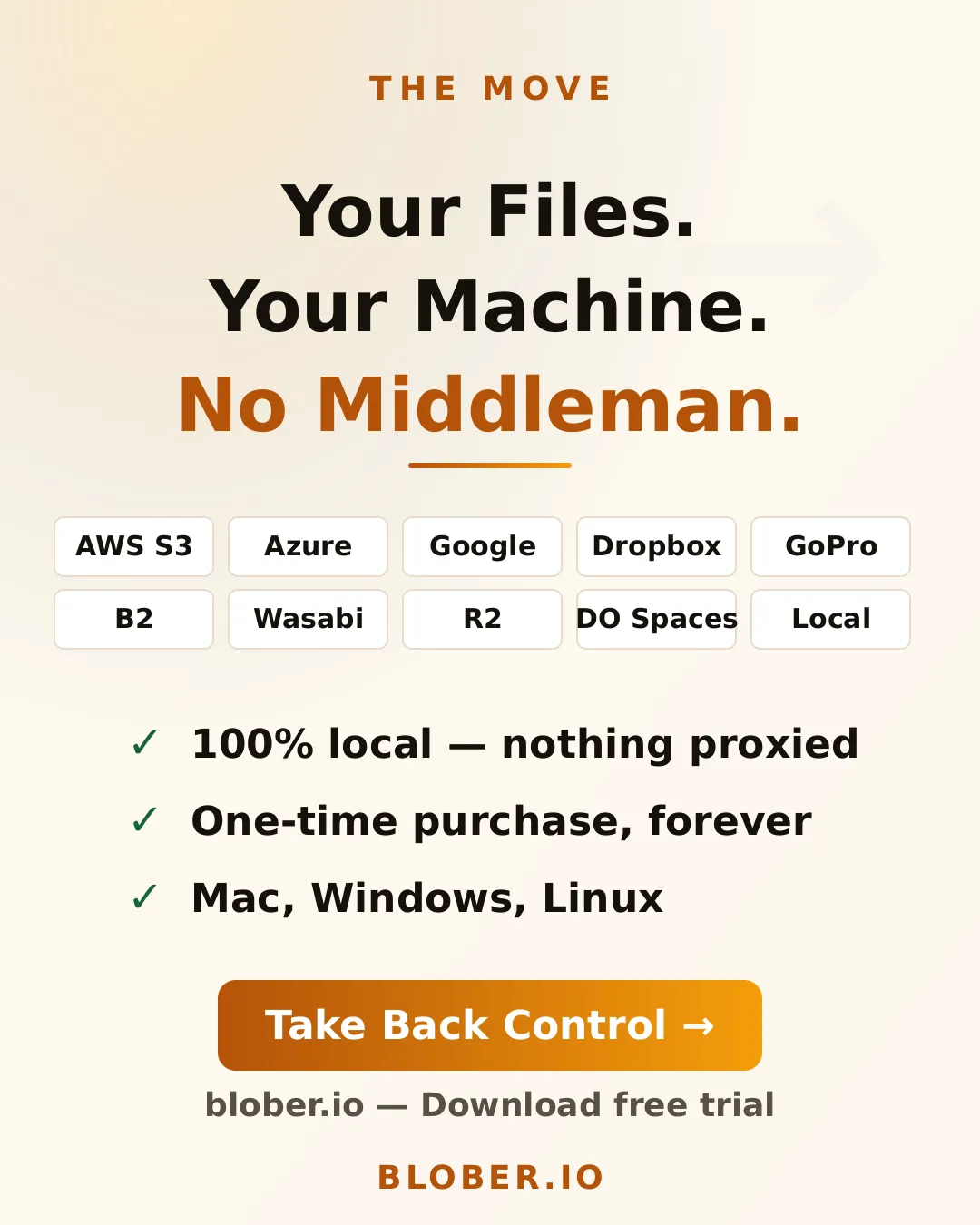

Blober is a desktop app — not a SaaS, not a CLI tool, not a cloud service. It runs on your Mac, Windows, or Linux machine and connects directly to your cloud providers:

* **AWS S3** — buckets and objects, any region

* **Azure Blob Storage** — containers and blobs

* **Google Drive** — files and folders, including shared drives

* **GoPro Cloud** — back up your action footage locally or to any cloud

* **Backblaze B2** — the affordable S3 alternative

* **Dropbox** — personal and business accounts

* **Cloudflare R2** — zero-egress object storage

* **Wasabi** — hot storage without the cold fees

* **DigitalOcean Spaces** — all regions, auto-detected

* **Local Disk** — any folder on your machine

Your files never touch a middleman server. Blober streams directly between your machine and the provider APIs. Browse your cloud storage visually, select what you want, pick a destination — done.

If a transfer gets interrupted (bad WiFi, laptop closed, provider hiccup), Blober picks up where it left off. No re-uploading. No duplicate files.

***

## The Move

Here’s what switching to Blober actually looks like:

**Before:** You’re juggling browser tabs, CLI sessions, and a spreadsheet tracking which files went where. A SaaS tool emails you that you’ve hit your 1.2 TB monthly cap. You Google “rclone config azure” for the third time.

**After:** You open Blober. Connect your accounts. Drag from source to destination. Walk away. It just works.

No account required to transfer. No internet needed for local-to-local moves. No data ever leaves your machine unless you’re sending it to a cloud provider *you* chose.

***

## Who Is This For?

* **Photographers & videographers** moving terabytes of footage from GoPro Cloud or Google Drive to cheaper archival storage

* **Developers & DevOps engineers** migrating between S3-compatible providers without writing scripts

* **Small businesses** consolidating cloud storage without paying an enterprise migration service

* **Privacy-conscious users** who want their files transferred directly, not through a third-party cloud

* **Anyone tired of paying monthly fees** to tools that move files you already own

***

## Get Blober

Your data. Your machine. Your rules.

**One payment. Unlimited transfers. No expiration.**

**[Download Blober → blober.io](https://blober.io)**

# The True Cost of Cloud Data Migration in 2026

> Subscriptions, per-GB fees, and hidden costs add up. Here's what cloud data migration actually costs - and why one-time pricing changes the math.

### The Hidden Tax on Moving Your Own Data

Moving data between cloud providers should be simple. You own the files - you just want them somewhere else. But the cloud industry has turned data migration into a profit center, layering fees at every step: egress charges, per-GB migration fees, monthly subscriptions, and data traffic caps.

Here’s what cloud data migration actually costs in 2026, and why [**Blober**](https://blober.io/)’s one-time pricing model is a fundamentally better deal for anyone who transfers data more than once.

***

### The Three Cost Models

#### 1. Per-GB Fees (Flexify.io)

Flexify charges a per-GiB fee for every migration, on top of your cloud provider’s egress charges.

| Migration Size | Flexify Fee (\~$0.03/GiB) | Provider Egress (AWS \~$0.09/GB) | **Total** |
| -------------- | ------------------------- | -------------------------------- | ------------- |
| 100 GB | $3 | $9 | **\~$12** |
| 1 TB | $30 | $92 | **\~$122** |
| 10 TB | $307 | $922 | **\~$1,229** |
| 100 TB | $3,072 | $9,216 | **\~$12,288** |

These are *per-job* costs. Run the same migration next month? Pay again. Sync regularly? The meter never stops.
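The per-job totals can be reproduced with a few lines of arithmetic. This is a sketch under simplifying assumptions: it uses the approximate rates from the table header, treats 1 TB as 1,024 GB, and ignores the small difference between GiB and GB. Results can differ from the table by a dollar because the table rounds each column before adding.

```python
FLEXIFY_FEE_PER_GIB = 0.03  # approximate rate from the table header
AWS_EGRESS_PER_GB = 0.09    # approximate rate from the table header
GB_PER_TB = 1024            # assumption: the table treats 1 TB as 1,024 GB


def job_cost(size_gb: float) -> tuple[float, float, float]:
    """Return (tool fee, provider egress, total) in USD for one migration job."""
    fee = size_gb * FLEXIFY_FEE_PER_GIB
    egress = size_gb * AWS_EGRESS_PER_GB
    return fee, egress, fee + egress


sizes_gb = {
    "100 GB": 100,
    "1 TB": GB_PER_TB,
    "10 TB": 10 * GB_PER_TB,
    "100 TB": 100 * GB_PER_TB,
}
for label, size_gb in sizes_gb.items():
    fee, egress, total = job_cost(size_gb)
    print(f"{label:>6}: fee ${fee:,.2f} + egress ${egress:,.2f} = ${total:,.2f}")

# Repeating the same 1 TB job every month for a year costs twelve single-job totals.
yearly_1tb = 12 * job_cost(GB_PER_TB)[2]
print(f"1 TB monthly for a year: ${yearly_1tb:,.2f}")
```

The last line illustrates the recurring nature of per-job pricing: the bill grows with every run, not just with the size of your data.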

Flexify does offer managed migrations for 10+ TB where provider egress may be avoided through direct peering - but those require contacting sales and negotiating custom pricing.

#### 2. Annual Subscriptions with Data Caps (MultCloud)

MultCloud charges an annual subscription that includes a fixed amount of transfer traffic:

| Plan | Annual Cost | Data Allowance | Cost Per TB Transferred |
| ------------- | ----------- | -------------- | ----------------------- |
| Free | $0 | 5 GB/month | N/A (60 GB/year cap) |
| 1,200 GB plan | $59.99/year | 1,200 GB/year | **\~$50/TB** |
| 2,400 GB plan | $99.98/year | 2,400 GB/year | **\~$42/TB** |

Hit the cap? Transfers stop until you renew. Need to move 5 TB? Even the top-tier plan covers only 2,400 GB per year, so you either spread the migration over more than two years or pay for multiple years upfront.

Over three years, MultCloud costs **$180–$300** in subscriptions alone, and you’re still capped on how much data you can actually move.
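The per-TB figures and the 5 TB scenario can be checked with a short sketch. It assumes the plan prices and allowances from the table, 1 TB = 1,000 GB (the convention that matches the table's per-TB column), and that a large migration is covered by buying whole plan years.

```python
import math

# (annual price in USD, annual allowance in GB), taken from the table above.
PLANS = {
    "1,200 GB plan": (59.99, 1200),
    "2,400 GB plan": (99.98, 2400),
}
GB_PER_TB = 1000  # assumption: decimal convention, matches the ~$50/TB and ~$42/TB figures


def cost_per_tb(annual_price: float, allowance_gb: int) -> float:
    """Effective price per TB if the full annual allowance is used."""
    return annual_price / (allowance_gb / GB_PER_TB)


def plan_years_needed(data_gb: float, allowance_gb: int) -> int:
    """Whole plan years required to cover `data_gb` of transfer."""
    return math.ceil(data_gb / allowance_gb)


for name, (price, allowance) in PLANS.items():
    print(f"{name}: ~${cost_per_tb(price, allowance):.0f}/TB")

price, allowance = PLANS["2,400 GB plan"]
years = plan_years_needed(5 * GB_PER_TB, allowance)
print(f"5 TB on the top plan: {years} plan years, ${years * price:.2f} total")
```

Because allowances are sold per year, a 5 TB migration consumes three plan years even though the third year's quota is barely touched.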

#### 3. One-Time License (Blober)

[**Blober**](https://blober.io/) charges a one-time license fee. No per-GB charges. No annual renewal. No data caps.

| Migration Size | Blober Cost | Provider Egress (your standard cloud fees) |
| -------------- | ------------------ | ------------------------------------------ |
| 100 GB | ✅ One-time license | Standard egress only |
| 1 TB | ✅ Same license | Standard egress only |
| 10 TB | ✅ Same license | Standard egress only |
| 100 TB | ✅ Same license | Standard egress only |

The only variable cost is your cloud provider’s standard egress fee - which you’d pay with *any* tool, including rclone. There is no Blober surcharge.

***

### The Compounding Problem
